2.0 Cataloging & Classification

Upper Matter

Introduction

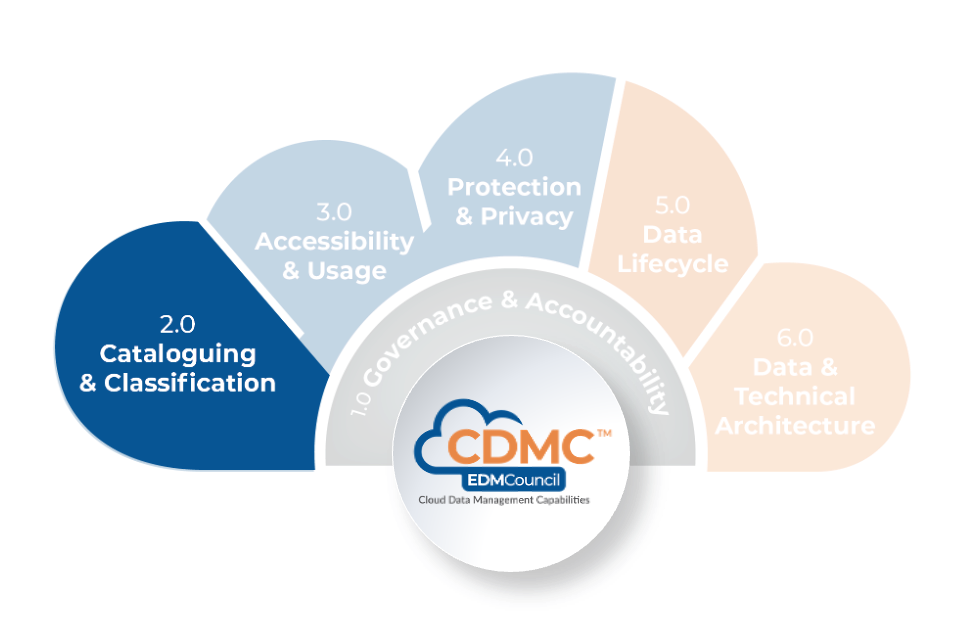

Effective cloud data management depends on a full understanding of all data assets. This understanding must include technical characteristics such as formats and data types, as well as contextual information supporting the full CDMC capabilities. This contextual information includes business definitions, classifications, sourcing, retention, physical location and ownership details. Together, these characteristics comprise the data catalog.

Description

The Data Cataloging & Classification component is a set of capabilities for creating, maintaining and using data catalogs that are both comprehensive and consistent. This component includes classifications for information sensitivity. These capabilities ensure that data managed in cloud environments is easily discoverable, readily understandable and supports well-controlled, efficient data use and reuse.

Scope

- Define the scope and granularity of data to be cataloged.

- Define the characteristics of data as metadata.

- Catalog the data and the data sources.

- Connect the metadata among multiple sources.

- Share metadata with authorized users to promote discovery, reuse and access.

- Enable sharing of metadata and data discovery across multiple catalogs, platforms and applications.

- Define, apply and use the information sensitivity classifications.

Overview

Understanding data assets in context becomes central when managing those assets through infrastructure controlled by the cloud provider instead of the data organization. Understanding how a cloud provider will control data assets is critically important for meeting regulatory requirements such as data residency and data protection.

An essential task in structuring a data catalog is defining an appropriate granularity and breadth of data assets to include in the catalog. Care must be taken to assess the overall costs of cataloging driven by legal and regulatory constraints and the amount of data in the cloud. It is essential to compare these costs with the value to the organization.

Comparatively, some challenges of implementing data catalogs may be smaller in a cloud computing environment. Consider the following:

- Since a cloud environment tracks all data assets that it stores for metering and security purposes, the cloud environment provides a necessary foundation for cataloging that does not exist in many on-premises environments.

- Cloud platforms host relatively few types of data stores. These data stores typically offer contemporary methods of integration, such as APIs. Conversely, a typical on-premises data landscape exhibits a much wider variety of data stores, including legacy technologies that may pose intractable challenges to data catalog integration.

- Given the near-instant availability of cloud infrastructure, potential time-to-market advantages may be realized by cataloging unstructured data with the aid of natural language processing. Other types of data may be easier to find through ontological discovery and exploration.

In maintaining a hybrid cloud environment that spans both on-premises and multiple cloud platforms, an organization will likely need to manage multiple data catalogs across multiple data storage technologies. Automation and cross-platform alignment will be critical to the interoperability of these disparate technologies.

Information sensitivity classification involves labeling data elements according to their business value or risk level. Data presents a ‘business risk’ if its disclosure, unauthorized use, modification or destruction could impact strategic, compliance, reputational, financial, or operational risk. This labeling is fundamental for security and regulatory compliance in all environments, especially diverse cloud environments with multiple suppliers. With multiple suppliers, data is likely to traverse multiple local or regional jurisdictions in a single workflow.

Implementation of information sensitivity classification in a cloud computing environment is essential to realizing these benefits:

- Availability of new functionality. Cloud technology provides new functionality, opportunities and approaches for data storage, management, access, cataloging, classification, movement, processing, archiving and permanent deletion. Integrating information sensitivity classification with this new functionality is vital to support a cohesive and seamless solution.

- Increased potential for automation. Information sensitivity classification provides a foundation for defining business rules that can consistently apply data usage, placement, encryption, distribution, and access across various legal or regulatory requirements.
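The automation described above can be illustrated with a minimal sketch in which each classification label maps to a handling rule that an automatic process can evaluate. The labels, region names and the `Treatment` structure are hypothetical and chosen only for illustration; an adopting organization would derive its own from its data management policy.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class Treatment:
    """Hypothetical handling rule attached to one sensitivity classification."""
    encrypt_at_rest: bool
    allowed_regions: FrozenSet[str]


# Assumed labels and regions; not taken from the CDMC framework.
TREATMENTS: Dict[str, Treatment] = {
    "public": Treatment(False, frozenset({"eu", "us", "apac"})),
    "confidential": Treatment(True, frozenset({"eu", "us"})),
    "restricted": Treatment(True, frozenset({"eu"})),
}


def placement_allowed(classification: str, region: str) -> bool:
    """Return True if data with this classification may be stored in `region`."""
    return region in TREATMENTS[classification].allowed_regions


def requires_encryption(classification: str) -> bool:
    """Return True if data with this classification must be encrypted at rest."""
    return TREATMENTS[classification].encrypt_at_rest
```

Because the rule is looked up from the classification alone, the same check can be applied consistently wherever the data is placed or moved.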

Understanding the purpose and importance of information sensitivity classification must be cultivated across an organization through people, processes and technologies.

In addition to information sensitivity classification, organizations may apply additional classifications to support specific business rules and precisely manage various data treatments throughout the entire data lifecycle. A primary assumption is that all information sensitivity and other classifications will be captured as metadata in the data catalog. For an exhaustive list, refer to the CDMC Information Model.

Metadata within a catalog may contain sensitive information, making it important to treat metadata itself as a data asset. Each metadata element should have an information sensitivity classification and be controlled by good data management practices such as access control and sensitivity tracking.

When it comes time to create that data catalog, all cloud data assets should be known. Also, each information security and data privacy risk should be known. This knowledge is vital to adopting and supporting the information sensitivity classification schemes that will control how to access, protect and manage the data through each stage in the data lifecycle.

Value Proposition

Organizations that create, maintain and share comprehensive data catalogs gain the ability to maximize controlled reuse of data assets.

Organizations that effectively support the information sensitivity classifications can benefit from enhancements in transparency and consistent treatment of data classifications. Maximum transparency on the precise locations of data storage and data transfer routes will enable automatic processes to manage, monitor and enforce consistent data treatment according to a specific information sensitivity classification. Standardizing an information sensitivity classification functionality also enables an authorized automatic process to manage, monitor and enforce security and regulatory compliance across multiple jurisdictions. In some instances, information sensitivity classification applies to additional classification metadata.

Core Questions

- Is there a definition of data asset scope and granularity for all data that will be cataloged?

- Is there agreement on a model and supporting standards for data characteristics to be captured as metadata?

- Is there a plan for connecting the metadata across multiple data catalogs?

- Is the metadata available to users and applications to promote discovery and reuse?

- Have standards been adopted that facilitate sharing metadata and data discovery across catalogs, platforms and applications?

- Has an information sensitivity classification system been defined, supported in the data catalogs and used to control data access and use?

Core Artifacts

- Data cataloging strategy and scope

- Metadata information model and naming standard

- Inventory of platforms and applications to support data catalog interoperability

- System interface definitions for machine-readable access to metadata in the catalog

- Interchange protocols for controlling the sharing and modification of metadata across platforms

- Data Management Policy, Standard and Procedure – defining and operationalizing the information sensitivity classification scheme and corresponding business rules

2.1 Data Catalogs are Implemented, Used and Interoperable

Data catalogs describe an organization's data as metadata, enabling it to be documented, discovered and understood. The data cataloging scope and approach must be defined. Catalogs must be implemented and populated with the metadata that describes the data. This metadata must be exposed to both users and applications, and standards should be defined and adopted to ensure that metadata can be exchanged between catalogs on different platforms.

2.1.1 Data Cataloging is defined

Description

Data cataloging is the process of collecting, organizing, and displaying metadata that pertains to data assets and is presented in a data catalog. Effective data management in a cloud computing context depends on a clear understanding of metadata that describes the content, source, ownership and other aspects of the data assets. Data catalogs describe technical information about the data assets, such as formats and data types. These catalogs also include contextual information such as classification, ownership, and residency requirements. Business and regulatory requirements define the scope of data assets managed by a particular data catalog in all data storage environments.

Objectives

- Define the scope of the data to include in the catalog.

- Align each catalog with the business strategy and in consideration of its risk appetite and control framework.

- Define the granularity and types of data assets that will be part of the catalog.

- Define the key characteristics of all data assets, including relationships among them.

- Define how metadata is sourced.

- Provide a catalog definition and scope that will enable data consumers to find and understand data assets easily.

Advice for Data Practitioners

The purpose of data cataloging is to provide a means for fully understanding information about all business data assets. The data catalog is the repository for identifying, understanding and managing all data assets. In addition, the data catalog supports ethical, legal, and regulatory compliance issues—for both individuals and processes.

Data cataloging involves a level of effort and cost. It is important to develop and implement a data cataloging strategy as part of an overall data strategy. This strategy must include regulatory requirements, business needs, and ethical considerations. It must clearly describe the value to be achieved. While it is important to inventory and document all data assets, the granularity of the descriptions and method of contextualization will vary according to the business value.

The business value criteria should define the Key Performance Indicators (KPIs) for revenue generation or cost reduction and the Key Risk Indicators (KRIs) to mitigate risk to the data assets. The scope may include any data that the organization requires, including data from internal and external sources.

Examples of metadata that data catalogs maintain include business terminology and technical metadata such as data types, formats, technical containment, and data models. Metadata may also include information about data services such as Application Programming Interfaces (APIs), business domains, ownership, licensing, and data movement. In addition, metadata may describe data stored in various forms: structured, unstructured and semi-structured.
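As an illustration only, the kinds of metadata listed above could be held in a single catalog record such as the one sketched below. The `CatalogEntry` class, its field names and the sample values are assumptions made for this sketch; they are not drawn from the CDMC Information Model.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CatalogEntry:
    """Hypothetical catalog record for one data asset."""
    asset_id: str
    business_term: str                 # business terminology
    data_form: str                     # "structured", "semi-structured" or "unstructured"
    data_format: str                   # technical metadata, e.g. a file format
    column_types: Dict[str, str] = field(default_factory=dict)
    business_domain: str = ""
    owner: str = ""
    licensing: str = ""
    api_endpoints: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """List the contextual fields that have not been filled in yet."""
        required = ("business_domain", "owner", "licensing")
        return [name for name in required if not getattr(self, name)]


# Sample values are invented for the example.
entry = CatalogEntry(
    asset_id="sales.orders",
    business_term="Customer Order",
    data_form="structured",
    data_format="parquet",
    column_types={"order_id": "string", "amount": "decimal"},
    owner="sales-data-office",
)
```

Calling `entry.missing_fields()` shows which contextual metadata still needs to be captured before the record can be considered complete under the assumed rule.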

Always-on data cataloging is essential for capturing sufficient metadata about each in-scope data asset. In addition, processes and technologies must be readily available for data specialists to perform data cataloging operations. Also, it is vital to define principles and capabilities for automatically discovering the minimum metadata for each in-scope data asset, either at the point of entry or the point of creation. All data definition and data management technology must support these principles. Note that a minimum level of data cataloging capability does not impact the availability or security of the contents of the data catalog. It may be entirely impracticable for human agents to maintain elements of the catalog. Consequently, automatic metadata discovery and capture are important for efficiency and scale.

To develop a flexible, descriptive, and efficient cataloging service, an organization may implement one or more data catalog technologies. It is important to ensure compatibility and consistency with on-premises, hybrid and multi-cloud environments when evaluating different offerings.

Advice for cloud service and technology providers

All data within a cloud environment must be inventoried, at least to a level of granularity that will support usage metering. For each data asset, some minimum amount of evidence must be available to be included in an inventory. Some evidence may be available in some environments, but it may be insufficient to capture the minimum amount of metadata for the data catalog. It should be feasible to enrich such evidence with additional contextual metadata or integrate it into a data catalog to support business and regulatory requirements. Tools that feature sufficient interoperability will capture minimal levels of evidence. Refer to CDMC 2.1.3 Data catalogs are interoperable across multi and hybrid cloud environments.

Data sensitivity and ethics are key considerations when dealing with data assets managed in a cloud computing environment. For such data assets, the metadata itself may contain sensitive personal data or other secrets. In these cases, the metadata is itself a data asset, and its treatment must follow data management policies like access control and sensitivity tracking. Catalog interoperability becomes a very important requirement for ensuring consistent and secure metadata management across all data catalogs when managing sensitive metadata.

Questions

- Has a data catalog strategy been defined, published, and communicated to the stakeholders?

- Has the data catalog strategy been implemented?

- Have data cataloging policies, standards and procedures been defined, verified, sanctioned, and published?

- Do the policy, standards, and strategy documents identify the scope of data assets and metadata?

- Does this scope align with the business objectives and strategy?

- Have technologies been chosen to support data cataloging by capturing and maintaining metadata for in-scope data assets?

- Has data catalog governance been aligned with current change-management and data-management policies?

Artifacts

- Data Cataloging Strategy and Scope

- Data Management Policy, Standards and Procedures – defining and operationalizing data cataloging

- Data Catalog Implementation Roadmap

- Data Catalog Architecture Document

Scoring

Not Initiated

No formal data cataloging exists.

Conceptual

No formal data cataloging exists, but the need is recognized, and the development is being discussed.

Developmental

Formal data cataloging is being developed.

Defined

The formal data cataloging is defined and validated by stakeholders.

Achieved

Formal data cataloging is defined, scoped and used by the organization.

Enhanced

Formal data cataloging is established as part of business-as-usual practice with continuous improvement.

2.1.2 Metadata is discoverable, enriched, managed, and exposed in data catalogs

Description

A data catalog promotes efficient data reuse by describing underlying data with metadata. Good metadata properly identifies, documents and elaborates on data elements available across an entire cloud data architecture. Effective metadata management and enrichment promote efficient data reuse. Examples include capturing the key data residency, classification, ownership, licensing and data protection of cloud data assets. Automating metadata harvesting and definition is vital to scale metadata management efforts across extensive cloud data architectures and on-premises platforms.

Objectives

- Define key metadata management capabilities in a data catalog, so underlying data is readily discoverable, highly organized, well-managed, open to enrichment and easily consumed by humans or by an automatic process.

- Source metadata and connect it to other data assets within the catalog to assist data management processes, improve usability and enhance the data efficacy.

- Correlate data assets to identify commonality, minimize duplication and promote contextual discovery.

- Promote the reuse of data assets by readily exposing metadata through the catalog.

- Ensure quick definition of any mandatory metadata, and correlate that metadata with underlying data assets. Metadata should include, for example, semantic meanings for data elements that support regulatory compliance.

Advice for Data Practitioners

Wherever possible, metadata should be harvested automatically from as many data assets as possible. Metadata from internal and external sources should be kept up-to-date and immediately accessible within the data catalog. Automation is essential to efficient and effective maintenance of the data catalog. However, it is important to understand that automatic discovery may provide only a portion of the necessary metadata, such as technical metadata. Consequently, methods must be available to create and enrich metadata manually. These methods must be governed with appropriate controls and are especially important when it is known that automatic discovery is unavailable or insufficient.
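The combination of automatic harvesting and governed manual enrichment can be sketched as a merge that records where each value came from. The function below is a minimal illustration; the rule that a manual value overrides a harvested one is an assumption of this sketch, not a requirement of the framework.

```python
from typing import Dict


def merge_metadata(harvested: Dict[str, str], manual: Dict[str, str]) -> Dict[str, dict]:
    """Combine harvested and manually curated metadata, recording provenance.

    Assumed rule: a manual value overrides a harvested value for the same key.
    """
    merged = {
        key: {"value": value, "source": "harvested"}
        for key, value in harvested.items()
    }
    for key, value in manual.items():
        merged[key] = {"value": value, "source": "manual"}
    return merged


# Invented sample values: technical metadata is harvested, context is added by hand.
harvested = {"format": "csv", "row_count": "1200"}
manual = {"owner": "finance", "format": "csv (pipe-delimited)"}
result = merge_metadata(harvested, manual)
```

Keeping the `source` alongside each value makes it possible to apply different controls to manually entered metadata than to harvested metadata.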

Promoting and implementing data reuse depends on the ability to find relevant data quickly. Metadata should be easily searchable and accessible at a suitable level of granularity—either by an individual or process.

The discovery of semantic relationships among various data assets and the ability to find related data assets promotes data usage. It is important to automate the collection and discovery of relationships among data assets and other metadata wherever possible. The need to automate is especially true for large, complex systems involving multiple data stores. Also, it should be possible to link and enrich metadata manually. To further promote data asset reuse, consider collaborative enrichment of data assets, such as tagging, commenting, rating, bookmarking, notifications and workflows.

The capture of metadata to support data management should be practiced through all data life cycle stages. Captured metadata includes design changes, implementation and extension of data stores and deployment of data assets. The data catalog should automatically define, capture, relate, and share metadata if it is practical. Examples include:

- Metrics, KPIs, SLAs and data ownership – to support data profiling and quality management. Refer to CDMC 5.2.2 Data quality is measured, and CDMC 5.2.3 Data quality metrics are reported.

- Classification of data properties – to specify sensitivity. Refer to CDMC 2.2.1 Data classifications are defined.

- Capture of provenance information, including data origin and footprint – to support tracing and authoritative sourcing. Refer to CDMC 1.3.1 Data sourcing is managed and authorized, and CDMC 6.2.1 Multi-environment lineage discovery is automated.

- Lifecycle metadata – to manage dataset maturity and enable records retention, archival and disposal policies. Refer to CDMC 5.1.1 A data lifecycle management framework is defined.

- Usage metadata – to audit access, purpose, sharing and ethical use of data. Refer to CDMC 3.1 Data Entitlements are Managed, Enforced and Tracked, and CDMC 3.2 Ethical Access, Use & Outcomes of Data are Managed.

NOTE: The list above is a summary guide to implementing data cataloging, so it is not exhaustive. Keep in mind that the data catalog provides a convergence point for all of this metadata.

The data catalog should support an information model and naming standards that satisfy the cataloging requirements of the adopting organization. The catalog should also support interoperability with other data catalogs and other cloud and on-premises capabilities. Refer to CDMC 2.1.3 Data catalogs are interoperable across multi and hybrid cloud environments.

The data catalog should offer the ability to maintain multiple versions of metadata, track user actions for auditability and maintain a history of metadata to support point-in-time inquiries. Each of these capabilities is important for regulatory and compliance purposes.
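One way to picture versioning, audit tracking and point-in-time inquiry together is an append-only history per metadata record, as in the minimal sketch below. The `MetadataHistory` class and its method names are hypothetical; a real catalog product would expose its own mechanism.

```python
from bisect import bisect_right
from datetime import datetime
from typing import List, Optional, Tuple


class MetadataHistory:
    """Hypothetical append-only version history for one metadata record."""

    def __init__(self) -> None:
        self._timestamps: List[datetime] = []
        self._versions: List[Tuple[str, dict]] = []  # (user, metadata snapshot)

    def record(self, when: datetime, user: str, metadata: dict) -> None:
        """Append a new version; versions must arrive in chronological order."""
        if self._timestamps and when < self._timestamps[-1]:
            raise ValueError("versions must be recorded in chronological order")
        self._timestamps.append(when)
        self._versions.append((user, dict(metadata)))

    def as_of(self, when: datetime) -> Optional[Tuple[str, dict]]:
        """Return the (user, metadata) version in effect at `when`, or None."""
        index = bisect_right(self._timestamps, when)
        return self._versions[index - 1] if index else None


# Invented sample history.
history = MetadataHistory()
history.record(datetime(2023, 1, 1), "alice", {"owner": "finance"})
history.record(datetime(2023, 6, 1), "bob", {"owner": "risk"})
```

An inquiry such as `history.as_of(datetime(2023, 3, 1))` returns the version recorded by `alice`, because the later change had not yet been made at that date.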

Advice for cloud service and technology providers

The metadata should be captured alongside the data to ensure it remains up-to-date. Beyond basic technical details, it is important to know the broader metadata requirements noted above in Advice for Data Practitioners. Verify that it will be possible to add this additional metadata into the data catalog or integrate basic metering metadata. Interoperability support will make it much easier to integrate. Refer to CDMC 2.1.3 Data catalogs are interoperable across multi and hybrid cloud environments.

Questions

- Have business and technical users been engaged to define cataloging capabilities, including any ethical concerns?

- Have capabilities been implemented for automatic discovery and enrichment of metadata?

- Are relationships actively maintained among the metadata, for example, between conceptual terminology and physical data elements?

- Are changes in data and metadata captured, the changes logged, user actions logged and all critical changes monitored for auditing purposes?

- Are operational Key Performance Indicators (KPIs), metrics and Service Level Agreements (SLAs) defined, produced and regularly shared to improve cataloging efficiency and effectiveness?

Artifacts

- Data Cataloging Principles and Strategy

- Data Catalogs

- Data Catalog Capabilities Implementation Roadmap

- Data Catalog Capabilities Release Notes and Schedule

- Data Catalog Capabilities Communication, Training and Adoption Plan

- Data Catalog Usage Metrics

- Metadata Refresh Log

Scoring

Not Initiated

No formal standards exist for metadata discovery, enrichment, management and exposure.

Conceptual

No formal standards exist for metadata discovery, enrichment, management and exposure, but the need is recognized, and the development is being discussed.

Developmental

Formal standards for metadata discovery, enrichment, management and exposure are being developed.

Defined

Formal standards for metadata discovery, enrichment, management and exposure are defined and validated by stakeholders.

Achieved

Formal standards for metadata discovery, enrichment, management and exposure are established and adopted by the organization.

Enhanced

Formal standards for metadata discovery, enrichment, management and exposure are established as part of business-as-usual practice with a continuous improvement routine.

2.1.3 Data catalogs are interoperable across multi and hybrid cloud environments

Description

Data catalog interoperability is an important capability in multi-cloud or hybrid-cloud environments. Catalogs should provide the ability to share information across different cloud service providers, technology providers and on-premises catalogs.

Enabling data catalog interoperability between platforms and applications is achieved by defining structure using:

- Catalog metadata naming standards and a catalog information model for data sets.

- Relationships between data sets.

- Data services.

Establishing these standards and models is essential to integration and consistency when sharing or combining catalogs. Maintaining these standards and models is vital to metadata automation, data governance, quality monitoring, data asset policy enforcement and compliance capabilities for usage tracking.

Also, data catalog interoperability is enhanced by implementing system-level interface standards such as APIs that work with interchange protocols that support mutability, mastering and synchronization.

Objectives

- Facilitate the sharing and use of common metadata across catalogs, platforms and applications for easy access or automatic synchronization.

- Enable a common and consistent understanding of underlying data across multiple platforms and applications.

- Support automatic enforcement of metadata policies such as data access controls across multiple platforms and applications.

- Support automatic enrichment of metadata, including data quality analysis and monitoring across multiple platforms and applications.

- Enable discovery of accessible data across multiple catalogs, platforms and applications.

Advice for Data Practitioners

Automatic discovery and maintenance of metadata are necessary to support always-on functionality and interoperability between catalogs, applications, and workflows. Refer to CDMC 2.1.2 Metadata is discoverable, enriched, managed, and exposed in data catalogs.

It is necessary to create naming standards and a metadata information model based on widely adopted open standards to support machine-readability by third-party platforms and applications that drive automation of data catalog metadata. Automatic synchronization with changes to those open standards ensures that the data catalog does not diverge from them.

The alignment of information models requires the definition of standard metadata types. These definitions describe how underlying data assets are to be defined and described. Such definitions will ensure consistency across multiple catalogs and the applications that use those catalogs. Refer to CDMC Information Model for further advice on the model. Adopting naming standards and a consistent information model requires business, data, technology, and regulatory compliance stakeholders to ensure a common understanding and consensus. Information model alignment supports automation in metadata enrichment, knowledge graph exploration, data lineage, data marketplaces, recommendation engines, data governance policy monitoring and enforcement, data quality monitoring, user access controls, usage tracking and compliance tracking and reporting.
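The role of a shared information model can be sketched as a translation step that renames each catalog's local field names to agreed standard names. The catalog names, field names and mapping table below are invented for illustration and do not represent the CDMC Information Model.

```python
from typing import Dict

# Hypothetical mappings from each catalog's local field names to standard names.
FIELD_MAPPINGS: Dict[str, Dict[str, str]] = {
    "catalog_a": {"tbl_owner": "owner", "sens_lvl": "sensitivity"},
    "catalog_b": {"dataOwner": "owner", "classification": "sensitivity"},
}


def to_common_model(catalog: str, record: Dict[str, str]) -> Dict[str, str]:
    """Rename a catalog-specific record to the standard field names.

    Fields without an agreed mapping are rejected rather than passed through,
    so that gaps in the naming standard surface early.
    """
    mapping = FIELD_MAPPINGS[catalog]
    unknown = sorted(set(record) - set(mapping))
    if unknown:
        raise KeyError(f"no standard name defined for: {unknown}")
    return {mapping[name]: value for name, value in record.items()}


record_a = to_common_model("catalog_a", {"tbl_owner": "finance", "sens_lvl": "confidential"})
record_b = to_common_model("catalog_b", {"dataOwner": "finance", "classification": "confidential"})
```

Once both records use the same names, downstream automation such as policy enforcement or lineage tooling can treat metadata from either catalog identically.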

After establishing an information model and catalog naming standards, these will need to be maintained through policies requiring the organization to abide by the standards. Any adjustments to the naming standards or the information model should follow the change management requirements defined by the data governance framework. These requirements may include change approvals, impact analysis, controlled implementation and rollout.

The best practices described in CDMC 1.2 Data ownership is Established for Both Migrated and Cloud-generated Data apply to metadata, such as establishing ownership for authoritative data sources and data sharing agreements on metadata that support multiple catalogs.

Data catalog security is essential for interoperability and also alignment with metadata entitlement, privacy and security policies.

While interoperability makes it possible to share metadata, it is the data consumer's responsibility to manage the ethical sharing of metadata across multiple platforms. It is particularly important to limit the sharing of commercially sensitive catalog information between cloud service providers. For example, data metrics and usage information from one provider should not be shared with another.

The best practices described in CDMC 3.0 Data Accessibility and Usage apply to metadata entitlements.

Advice for cloud service and technology providers

Establishing open naming standards and open information models is important to ease integration among platforms and reduce adopters' burden.

Establishing a system-level interface that specifies how platforms and applications access metadata assets within the data catalog is essential to drive automation. Examples of a system-level interface include an API, event-based mechanisms and semantic web traversals. Interchange protocols should also be maintained through policy to ensure that shared information is properly managed and that the risk of losing the source of truth, for example through inconsistent changes in multiple locations, is minimized.
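A simple way to illustrate protecting the source of truth is a mastering rule in which each metadata attribute may be changed only by the catalog designated as its master. The `MasteredMetadata` class below is a hypothetical sketch of such a rule, not a description of any specific interchange protocol.

```python
from typing import Dict, Optional


class MasteredMetadata:
    """Hypothetical store in which each attribute has one mastering catalog."""

    def __init__(self, masters: Dict[str, str]) -> None:
        self._masters = dict(masters)        # attribute -> mastering catalog
        self._values: Dict[str, str] = {}

    def apply_change(self, catalog: str, attribute: str, value: str) -> bool:
        """Accept the change only if `catalog` masters `attribute`."""
        if self._masters.get(attribute) != catalog:
            return False
        self._values[attribute] = value
        return True

    def get(self, attribute: str) -> Optional[str]:
        """Return the current value of `attribute`, or None if unset."""
        return self._values.get(attribute)


# Invented example: ownership is mastered on-premises, residency in a cloud catalog.
store = MasteredMetadata({"owner": "on_prem_catalog", "residency": "cloud_catalog"})
accepted = store.apply_change("on_prem_catalog", "owner", "finance")
rejected = store.apply_change("cloud_catalog", "owner", "marketing")
```

Rejecting the second change keeps the two catalogs from diverging on ownership; other designs, such as merge-based synchronization, are equally possible.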

The cloud service provider must supply transparency on the treatment of metadata to enable organizations to ensure proper isolation between cloud platform tenants. Therefore, any metadata shared through these interoperability mechanisms can be strictly controlled through entitlement, privacy and security policies.

Questions

- Have naming standards been established for all data catalogs?

- Are catalog naming standards consistent and in proper alignment?

- Has an information model been created or adopted for catalog data asset definitions?

- Are data catalogs portable or readable by external applications?

- Is there documentation and tooling for onboarding a new external application to read the current catalogs?

- Is there a procedure for onboarding a new catalog that is to be readable by external applications?

- Is there a procedure for migrating existing catalogs?

- Are there proper controls to govern metadata changes to maintain the source of truth for the metadata?

Artifacts

- Catalog Metadata Naming Conventions

- Metadata Information Model and Naming Standard (Refer to the CDMC Information Model)

- Data Catalog Interoperability Technology Tool Stack

- System Interface Definitions – for machine-readable access to metadata in the catalog

- Interchange Protocols – for controlling the sharing and modification of metadata across platforms

Scoring

Not Initiated

No formal standards exist for data catalog interoperability.

Conceptual

No formal standards exist for data catalog interoperability, but the need is recognized, and the development is being discussed.

Developmental

Formal standards for data catalog interoperability are being developed.

Defined

Formal standards for data catalog interoperability are defined and validated by stakeholders.

Achieved

Formal standards for data catalog interoperability are established and adopted by the organization.

Enhanced

Formal standards for data catalog interoperability are established as part of business-as-usual practice with continuous improvement.

2.2 Data Classifications are Defined and Used

From the very moment it is created, data can be both a liability and an asset. Poorly managed data is likely to pose a risk if it is used inappropriately or accessed by unauthorized users. Such risks increase in a cloud environment, and many organizations increase their exposure as they move massive amounts of critical data into the cloud.

An information sensitivity classification is a scheme for labeling data elements according to business risk level or value. Data presents a business risk if its disclosure, unauthorized use, modification or destruction could impact a strategic, compliance, reputational, financial, or operational risk. Information sensitivity specifies how to access, treat and manage a data element through each stage of its lifecycle. This labeling is essential to security and regulatory compliance in all applications and a growing portion of cloud environments.

2.2.1 Data classifications are defined

Description

The information sensitivity classification is defined and approved. Business rules that specify how the classifications apply to combinations and aggregations of individually classified data are defined and approved.

Objectives

- Define information sensitivity classifications within the data management policy such that the classifications are mutually exclusive and accurately reflect business risk levels and values.

- Define business rules to ensure consistent application of order of precedence for information sensitivity classification.

- Define business rules for classifying combinations of data elements. Some combinations of data elements will have a collective sensitivity that is greater than the individual data elements.

- Define business rules for aggregating the classifications of individual data elements to be held in a repository or moved to an application.

- Define business rules for treating unclassified data or setting a default information sensitivity classification for individual data elements.

- Define principles and guidelines that anticipate changes to data classification at some point in the data lifecycle.

Advice for data practitioners

The purpose of information sensitivity classification is to identify the business risk and value of data. Information sensitivity classifications also constrain data accessibility and handling by data management, security and downstream business processes.

Set a policy and guidelines that enable fast and intuitive decisions concerning the classification of applications, documents, messages and files. Define and use labels and terms that are instantly recognizable and meaningful. Keep the number of different identifiers to a minimum to promote simplicity and consistency. An information sensitivity classification can be:

- Derived according to the content. Users may be required to identify the content type at the time of creation, or the capability may exist to analyze content to determine or constrain the classification.

- User-driven. Users may be required to choose the appropriate classification.

The guidelines should include how to detect changes and update classifications accordingly. Potential conflicts between automatic assignment and manual user assignment must be reconciled, and the reconciled classification must cascade across all repositories.

Other data classification types may be necessary to support additional regulatory compliance requirements, risk reporting and specific business objectives. The assumption is that the use of data will be governed by metadata maintained in the data catalog, including the information sensitivity classification.
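As an illustration of content-derived classification, the following minimal sketch suggests a label by scanning text for a pattern. The rule list, the label names and the function `suggest_classification` are hypothetical and not part of the framework; a production capability would use far richer detection.

```python
import re

# Hypothetical detection rules (assumption): each pattern maps to a suggested label.
CONTENT_RULES = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "confidential"),  # email-like strings
]

# Hypothetical label suggested when no rule matches (assumption).
FALLBACK_LABEL = "internal use only"


def suggest_classification(text: str) -> str:
    """Return the label of the first rule whose pattern occurs in the text."""
    for pattern, label in CONTENT_RULES:
        if pattern.search(text):
            return label
    return FALLBACK_LABEL


print(suggest_classification("Contact: jane.doe@example.com"))  # confidential
print(suggest_classification("Quarterly roadmap"))              # internal use only
```

The suggestion can either be applied automatically or presented to the user as guidance, which corresponds to the two modes listed above.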

Implementing any information sensitivity classification scheme must align with the ethical review of the data access, use, and outcome. Refer to CDMC 3.2 Ethical Access, Use, & Outcomes of Data Are Managed.

Information sensitivity classifications must be mutually exclusive, where applicable. For example, the same data element cannot be simultaneously sensitive and public. There must be rules in place to ensure consistent application of order of precedence for information sensitivity classification. The order of precedence determines that the higher level applies when a conflict arises.
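One way to express an order of precedence is to rank the levels and let the highest candidate win. The sketch below assumes four hypothetical levels; the names and the helper `resolve_conflict` are illustrative only and not prescribed by the framework.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels; a higher value means more sensitive."""
    PUBLIC = 1
    INTERNAL_USE_ONLY = 2
    CONFIDENTIAL = 3
    HIGHLY_RESTRICTED = 4


def resolve_conflict(*candidates: Sensitivity) -> Sensitivity:
    """Apply the order of precedence: the highest candidate level applies."""
    if not candidates:
        raise ValueError("at least one candidate classification is required")
    return max(candidates)


# The element ends up with exactly one label, so the levels stay mutually exclusive.
label = resolve_conflict(Sensitivity.PUBLIC, Sensitivity.CONFIDENTIAL)
print(label.name)  # CONFIDENTIAL
```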

Formalize and document information sensitivity classification and any accompanying rules for the appropriate level of protection. These additional rules might govern whether an information sensitivity classification label is itself to be protected or otherwise obfuscated to protect the sensitivity of the underlying data elements.

If required by the data management policy, define and implement rules that specify a more stringent classification for combinations and aggregations of data. For example, consider a simple database consisting of three tables, each with a confidential classification. Data that is accessed from all three tables simultaneously may be constrained with a highly confidential classification, because aggregation may render the sensitivity of the repository greater than that of any individual data element. As another example, the three data elements First Name, Last Name and Home Address may not be sensitive individually. In combination, however, these elements likely identify a specific person and consequently constitute Personally Identifiable Information (PII).
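A combination rule of this kind can be sketched as a lookup that raises the level of a collection when a sensitive set of elements appears together. The level names, the element names and the rule table below are assumptions made for illustration, not values defined by the framework.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels; a higher value means more sensitive."""
    PUBLIC = 1
    INTERNAL_USE_ONLY = 2
    CONFIDENTIAL = 3
    HIGHLY_CONFIDENTIAL = 4


# Hypothetical combination rules (assumption): when every element in the key
# is present, the collection is classified at least at the given level.
COMBINATION_RULES = {
    frozenset({"first_name", "last_name", "home_address"}): Sensitivity.CONFIDENTIAL,
}


def classify_collection(elements: dict[str, Sensitivity]) -> Sensitivity:
    """Classify a collection of individually classified data elements."""
    if not elements:
        raise ValueError("the collection must contain at least one element")
    # Start from the most sensitive individual element.
    level = max(elements.values())
    # Raise the level if a sensitive combination is fully present.
    for combination, elevated in COMBINATION_RULES.items():
        if combination.issubset(elements.keys()):
            level = max(level, elevated)
    return level


person = {
    "first_name": Sensitivity.INTERNAL_USE_ONLY,
    "last_name": Sensitivity.INTERNAL_USE_ONLY,
    "home_address": Sensitivity.INTERNAL_USE_ONLY,
}
print(classify_collection(person).name)  # CONFIDENTIAL
```

Starting from the maximum of the individual levels means a combination rule can only raise a classification, never lower it.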

Advice for cloud service and technology providers

Whether on-premises or in cloud environments, information sensitivity classification is important to data and security management. However, a cloud environment may have a more complex control framework.

A suitable cloud environment should provide metadata functionality for each distinct data storage entity within that environment. This functionality must permit the assignment of an information sensitivity classification value for each data element, in support of cross-organization control function policies.

Questions

- Has a unique and precise information sensitivity classification scheme been defined and approved?

- Has the classification scheme been integrated with the cross-organization control function policies?

- Has it been embedded within the culture and aligned with data governance architecture?

- Have business rules been established to guide the classification of data element combinations, and do these rules also guide the inheritance of classifications to higher-level repositories and systems?

Artifacts

- Data Management Policy, Standard and Procedures – defining and requiring the assignment of information sensitivity classification schemes and corresponding business rules

- Information Sensitivity Scheme Specification

Scoring

Not Initiated

No formal data classification schemes and business rules exist.

Conceptual

No formal data classification schemes and business rules exist, but the need is recognized, and the development is being discussed.

Developmental

Formal data classification schemes and business rules are being developed.

Defined

Formal data classification schemes and business rules are defined and validated by stakeholders.

Achieved

Formal data classification schemes and business rules are established and adopted by the organization.

Enhanced

Formal data classification schemes and business rules are established as part of business-as-usual practice with continuous improvement.

2.2.2 Data classifications are applied and used

Description

An information sensitivity label must be assignable to all individual data elements and collections and aggregations of data elements where possible. An information sensitivity label is useful for controlling data access, treatment, and management in each data lifecycle stage.

Objectives

- Implement classification schemes across all on-premises and cloud data assets.

- Implement classification at the point of creation.

- Support classification with technology that analyzes the content and continually assigns or guides classification decisions.

- Provide users the ability to validate any automatic classification processes.

- Configure downstream data governance and security solutions to apply information sensitivity classifications as the basis for jurisdictional placement, encryption, distribution, access and usage.

Advice for data practitioners

Automating the information sensitivity classification processes promotes consistency of classifications.

Classification is always required and must be always on. Best practices in cloud data management involve establishing the appropriate data classifications before ingesting data into the cloud environment. To achieve this, an organization must define rules for setting the default classification of new data elements and for handling any future data changes. In addition, rules must be established on how to handle unclassified data. Whenever possible, the application of these rules should be automatic.
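A default-classification rule might look like the following minimal sketch. The `CatalogEntry` type, the default label and the `needs_review` flag are hypothetical; the actual default and the review process are policy decisions for the organization.

```python
from dataclasses import dataclass, replace
from typing import Optional

# Hypothetical restrictive default for data that arrives unclassified (assumption).
DEFAULT_LABEL = "confidential"


@dataclass(frozen=True)
class CatalogEntry:
    """Hypothetical catalog record for a single data element."""
    name: str
    classification: Optional[str] = None
    needs_review: bool = False


def apply_default(entry: CatalogEntry) -> CatalogEntry:
    """Give an unclassified entry the default label and flag it for review."""
    if entry.classification is not None:
        return entry
    return replace(entry, classification=DEFAULT_LABEL, needs_review=True)


ingested = apply_default(CatalogEntry(name="customer_notes"))
print(ingested.classification, ingested.needs_review)  # confidential True
```

Flagging defaulted entries keeps the rule automatic while still letting users validate the result later.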

Advice for cloud service and technology providers

Cloud environments should provide the ability for authorized users or services to:

- Inspect the classification of a specific data entity.

- Assign or modify the classification of a specific data entity.

- For any specific parent data entity, list the information sensitivity classification values of all child data entities (with the option to limit how many lower hierarchy levels are included).
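The last capability in the list above could be sketched as a depth-limited traversal of an entity hierarchy. The `DataEntity` type, the sample hierarchy and the `max_depth` parameter are hypothetical and do not represent any provider's API.

```python
from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass
class DataEntity:
    """Hypothetical data entity with a classification and child entities."""
    name: str
    classification: str
    children: list["DataEntity"] = field(default_factory=list)


def child_classifications(
    parent: DataEntity, max_depth: Optional[int] = None
) -> Iterator[tuple[str, str]]:
    """Yield (name, classification) for descendants, depth-first.

    max_depth limits how many levels below the parent are visited;
    None means no limit.
    """
    if max_depth is not None and max_depth <= 0:
        return
    next_depth = None if max_depth is None else max_depth - 1
    for child in parent.children:
        yield child.name, child.classification
        yield from child_classifications(child, next_depth)


database = DataEntity("crm", "confidential", [
    DataEntity("customers", "confidential", [
        DataEntity("email", "confidential"),
        DataEntity("signup_date", "internal use only"),
    ]),
    DataEntity("products", "public"),
])

print(list(child_classifications(database, max_depth=1)))
# [('customers', 'confidential'), ('products', 'public')]
```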

Questions

- Is classification captured consistently at the point of data element creation?

- Is it possible to modify classification accordingly when data changes during the data lifecycle?

- Is there evidence that classifications are established before new applications and datasets are integrated into the cloud environment?

- Has technology been implemented to detect and assign data types to improve the quality and consistency of information sensitivity classification?

- Is information sensitivity classification utilized within all business processes and systems?

- Does the information sensitivity classification serve as the foundation for access, usage, security at rest and in motion, storage, transport, sharing, archival and data destruction?

Artifacts

- Data Catalog Report – evidence of assigned information sensitivity classification at all points of creation across the application and data landscape

- Classification Recommendation Log – evidence produced by technology that analyzes business content to guide users or specify sensitivity classification of information

- Data Usage Log – evidence that downstream systems and business applications utilize the information sensitivity classification scheme as the basis for usage, jurisdictional placement, encryption, distribution and access

- Change Management Standard – evidence that classification definition and application considerations are integral to the approvals in conventional or agile software development lifecycles

Scoring

Not Initiated

No formal standards exist for the application and use of data classifications.

Conceptual

No formal standards exist for the application and use of data classifications, but the need is recognized, and the development is being discussed.

Developmental

Formal standards for the application and use of data classifications are being developed.

Defined

Formal standards for the application and use of data classifications are defined and validated by stakeholders.

Achieved

Formal standards for the application and use of data classifications are established and adopted by the organization.

Enhanced

Formal standards for the application and use of data classifications are established as part of business-as-usual practice with continuous improvement.

2.3 Cataloging & Classification - Key Controls

The following Key Controls align with the capabilities in the Cataloging & Classification component:

- Control 5 – Cataloging

- Control 6 – Classification

Each control with associated opportunities for automation is described in CDMC 7.0 – Key Controls & Automations.

Key Control 5

| Control 5: Cataloging | |
| --- | --- |
| Component | 2.0 Cataloging & Classification |
| Capability | 2.1 Data Catalogs are Implemented, Used, and Interoperable |
| Control Description | Cataloging must be automated for all data at the point of creation or ingestion, with consistency across all environments. |
| Risks Addressed | The existence, type and context of data are not identified, resulting in the inability of all other controls to be applied that are dependent on the data scope. Data is uncontrolled and consequently is at risk of not being fit for purpose, late, missing, corrupted, leaked and in contravention of data sharing and retention legislation. |
| Drivers / Requirements | Organizations must ensure the necessary controls are in place for large or complex workloads that involve sensitive data such as client identifiers and transactional details. Knowledge of all data that exists is foundational to ensuring that all sensitive data has been identified. |
| Legacy / On-Premises Challenges | Organizations cannot scan and catalog the significant variety of data assets that exist in legacy on-premises environments. Without comprehensive catalogs of all existing data, organizations cannot be confident that all sensitive data within their data assets have been identified. |
| Automation Opportunities | |
| Benefits | An organization can guarantee that all data has been cataloged and can use this as the foundation on which to automate and enforce controls based on the metadata in the catalog. |
| Summary | This is the infrastructure describing what data exists, to see how much there is and how many different types there are. It is the foundation of all the other controls. |

Key Control 6

| Control 6: Classification | |
| --- | --- |
| Component | 2.0 Cataloging & Classification |
| Capability | 2.2 Data Classifications are Defined and Used |
| Control Description | Classification must be automated for all data at the point of creation or ingestion and must be always on. |
| Risks Addressed | Sensitive data is not classified, resulting in the inability of all other controls to be applied that are dependent on the classification. Data is uncontrolled and consequently is at risk of not being fit for purpose, late, missing, corrupted, leaked and in contravention of data sharing and retention legislation. |
| Drivers / Requirements | Information sensitivity classification (ISC) is required by most organizations’ information security policies. An organization is required to know whether data is highly restricted (HR), classified (C), internal use only (IUO), or public (P), and if it is sensitive. Knowing whether data is sensitive is the foundation of most other controls in the framework. This requires certainty that all data has been cataloged and certainty that the sensitivity of the data has been determined. |
| Legacy / On-Premises Challenges | The variety of data assets in legacy environments impacts the ability to ensure that all data has been identified. Sensitive data may exist in data assets that have not been identified. Classification of data assets is often manual and can be both error-prone and expensive. Even where assets are identified, there may be gaps or errors in the classification. The proliferation of copies of data in legacy environments can lead to classifications in data sources not being carried through to copies of the data. |
| Automation Opportunities | |
| Benefits | The operations team that is responsible for classifying data is expensive. Auto-classification can significantly streamline and reduce the amount of manual effort required to perform this function. |
| Summary | Auto-classification of data provides confidence that all sensitive data has been identified and can be controlled. |