5.0 Data Lifecycle

Upper Matter

Introduction

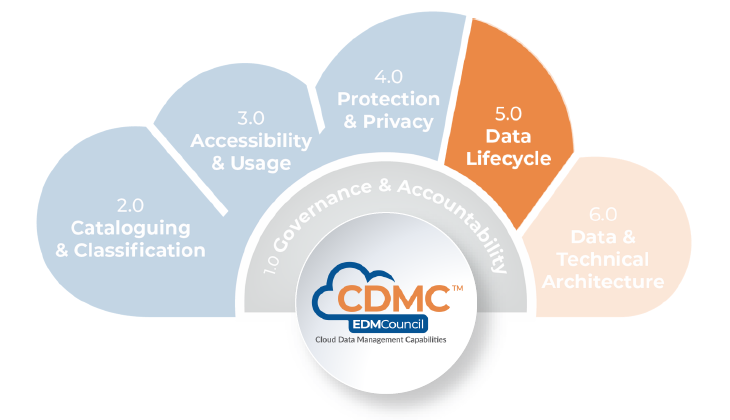

A data lifecycle describes the sequence of stages data traverses, including creation, usage, consumption, archiving and destruction. Data may reside in or move through cloud environments at any of these stages. It may be consumed and used at different stages in different environments. Practitioners must apply proper data management and data management controls across all lifecycle stages to maintain data quality and consistency.

Description

The Data Lifecycle component is a set of capabilities for defining and applying a data lifecycle management framework and ensuring that data quality in cloud environments is managed across the data lifecycle.

Scope

- Define, adopt and implement a data lifecycle management framework.

- Ensure that data at all stages of the data lifecycle is properly managed.

- Define, code, maintain and deploy data quality rules.

- Implement processes to measure, publish and remediate data quality issues.

Overview

A data lifecycle management framework supports effective management of data assets in an organization, beginning with creation or acquisition, and continuing through use, maintenance, archiving according to business need, and disposal. A well-designed data lifecycle management framework will ensure that the most useful and recent data is readily accessible. It can also deliver storage cost efficiencies by automatically migrating data to alternative storage tiers as the data becomes obsolete. A solid framework also includes rules for automatic archiving and disposal of data. In addition, data tagging can be used to manage various exceptions to the data lifecycle, such as enforcing the retention of data that is subject to legal holds or preservation orders.

A data lifecycle management framework formalizes the different phases and activities of a data lifecycle. Data must be managed consistently throughout the data lifecycle regardless of where the data resides or how it is used, whether in a cloud or on-premises environment. To ensure compliance with legal and regulatory requirements, an organization needs to ensure that data archiving and destruction are managed consistently across all environments.

Consistent data quality management across the data lifecycle is critically important in cloud, multi-cloud, and hybrid-cloud environments. An effective data lifecycle management framework enables the consistent and uniform use of tooling across these environments. For example, it should be possible to execute the same data quality rule and generate consistent results regardless of whether the data is at rest or in motion across various environments. The uniform tooling enhances the ability to consistently implement distributed data quality services and rules and integrate outputs into a common repository.
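As a minimal sketch of the idea that one rule should give the same result for data at rest and data in motion, the example below defines a single rule function and applies it in both a batch mode and a streaming mode. The rule `customer_id_present`, the record layout and both measurement helpers are hypothetical names chosen for illustration; they are not part of any specific tool.

```python
from typing import Callable, Dict, Iterable, Iterator, Tuple

Record = Dict[str, object]
Rule = Callable[[Record], bool]


def customer_id_present(record: Record) -> bool:
    """Hypothetical completeness rule: customer_id must be a non-empty string."""
    value = record.get("customer_id")
    return isinstance(value, str) and value.strip() != ""


def measure_at_rest(records: Iterable[Record], rule: Rule) -> float:
    """Batch mode: return the pass rate of the rule over a stored data set."""
    results = [rule(r) for r in records]
    # Assumption: an empty data set is treated as fully passing.
    return sum(results) / len(results) if results else 1.0


def measure_in_motion(stream: Iterable[Record], rule: Rule) -> Iterator[Tuple[Record, bool]]:
    """Streaming mode: yield each record with its pass/fail result as it arrives."""
    for record in stream:
        yield record, rule(record)


sample = [{"customer_id": "C1"}, {"customer_id": ""}, {"customer_id": "C3"}, {}]

batch_pass_rate = measure_at_rest(sample, customer_id_present)
stream_results = [ok for _, ok in measure_in_motion(iter(sample), customer_id_present)]
stream_pass_rate = sum(stream_results) / len(stream_results)
```

Because both modes call the same rule function, the pass rate computed over the stream matches the batch pass rate for the same records, and both sets of results could be written to a common repository.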

An effective data lifecycle management framework also enables transparency and traceability of data throughout its lifecycle. Metrics can be established in lineage views of data flows across multiple environments, improving the ability to discover the sources of data quality issues by exposing the points in processes at which data quality deterioration is occurring. Cloud-based data lifecycle management framework solutions offer the opportunity to move to near-instantaneous alerting on data quality rule failures, enabling rapid diagnosis of issues, root cause analysis and remediation.

Value Proposition

Establishing an effective data lifecycle management framework enables an organization to apply proper data management best practices throughout the lifecycle. Data needs to be properly protected and utilized while maintaining data integrity and quality from capture to use. By deploying a data lifecycle management framework, an organization can combine the best data management practices with the features and functionality of cloud computing to deliver secure and trusted data to its end-users.

An effective data lifecycle management framework will:

- Enable better oversight of data through all stages of its lifecycle, ensuring better controls, protection, and appropriate uses of data.

- Enable the use of advanced artificial intelligence and machine learning techniques for detecting data quality, data integrity and other issues throughout the data lifecycle.

- Enable dynamic sizing of processing capacity at all lifecycle stages, providing better on-demand capabilities for high data workloads and avoiding significant capital expenditure on dedicated infrastructure.

Organizations can automate data lifecycle management processes using metadata-driven rules.

Core Questions

- Has a comprehensive data lifecycle management framework been defined and approved?

- Has the data lifecycle management framework been implemented?

- Is data mapped to an appropriate retention schedule?

- Are data quality rules and measurements being managed according to an agreed standard?

- Are processes for the design of data quality outputs defined?

- Do data quality issue management policy, standards and procedures apply across on-premises and cloud environments?

Core Artifacts

- Data Lifecycle Management Framework

- Data Management Policy, Standard and Procedure – defining and operationalizing data lifecycle management

- Data Retention Schedule Specification

- Data Quality Rules Standard

- Data Quality Measurement Process

- Data Quality Rules Design Process

- Data Management Policy, Standard and Procedure – defining and operationalizing data quality issue management

5.1 The Data Lifecycle is Planned and Managed

Effective management of data throughout its lifecycle requires a Data Lifecycle Management framework to be defined and enshrined in policies, standards and procedures. The lifecycle must then be managed for all data assets, whether on-premises or in cloud environments.

5.1.1 A data lifecycle management framework is defined

Description

Ensuring that data is properly managed throughout its lifecycle is a strategic imperative for any digital organization. A well-designed data lifecycle management framework ensures that the most useful and recent data is readily accessible while delivering storage cost-efficiency. Framework design must also include considerations for information security and privacy to ensure compliance with regulatory requirements.

Objectives

- Gain approval on the taxonomy of stages of the data lifecycle to be adopted by the organization.

- Specify the metadata necessary to support automation of data lifecycle management and controls.

- Define policies for storage tiering as data progresses through the stages of the lifecycle.

- Define a policy and standards for data placement, retention and disposal.

- Ensure the retention and disposal policy addresses lifecycle exceptions.

- Define standards for the secure disposal of data from storage media such that data is not recoverable by any reasonable forensic means.

Advice for Data Practitioners

The data lifecycle management framework must be defined and documented in policies, standards and procedures with the approval of all key stakeholders. Data management policies, standards and procedures must support the data lifecycle management framework for data hosted on-premises and in cloud environments.

The data lifecycle management framework should address the various requirements that pertain to data domains, data sensitivity, legal ownership and location. Metadata for each dimension should be captured in the data catalog (refer to CDMC 2.1 Data Catalogs are Implemented, Used, and Interoperable). This metadata can support the automation of controls that enforce the policies and ensure compliance with applicable laws and regulations. Legal ownership and data sovereignty requirements will influence how backup, archiving, access, retrieval and disposal are designed, supported and implemented.

Cloud environments offer different storage tiers and policy-driven placement, presenting cost-saving and automation opportunities. Storage-tiering policies and rules will typically be based on metadata such as age, last modified date, last accessed date, lifecycle status and data domain. Such policies can deliver cost savings, but the policies should also ensure the satisfaction of business requirements such as availability, resiliency, speed of access and retrieval, and retention (in alignment with the master retention schedule of the organization). Policies should also maintain compliance with applicable laws and regulations.
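A metadata-driven tiering rule of this kind might be sketched as follows. The tier names, the day thresholds, the `lifecycle_status` values and the pinned domain are all assumptions made for illustration. Real policies would take these from the organization's standards and from the storage classes its providers offer.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AssetMetadata:
    last_accessed: date
    lifecycle_status: str  # illustrative values: "active", "archived"
    data_domain: str


# Hypothetical thresholds, in days since last access.
WARM_AFTER_DAYS = 30
COLD_AFTER_DAYS = 180

# Hypothetical business requirement: these domains need fast access at all times.
PINNED_HOT_DOMAINS = {"payments"}


def choose_tier(meta: AssetMetadata, today: date) -> str:
    """Return a storage tier name based only on catalog metadata."""
    if meta.lifecycle_status == "archived":
        return "archive"
    if meta.data_domain in PINNED_HOT_DOMAINS:
        # Business requirements take precedence over cost savings.
        return "hot"
    idle_days = (today - meta.last_accessed).days
    if idle_days >= COLD_AFTER_DAYS:
        return "cold"
    if idle_days >= WARM_AFTER_DAYS:
        return "warm"
    return "hot"


today = date(2024, 6, 30)
recent = AssetMetadata(date(2024, 6, 20), "active", "marketing")
idle = AssetMetadata(date(2024, 5, 1), "active", "marketing")
stale = AssetMetadata(date(2023, 6, 30), "active", "marketing")
pinned = AssetMetadata(date(2023, 6, 30), "active", "payments")
archived = AssetMetadata(date(2024, 6, 29), "archived", "marketing")
```

The ordering of the checks is a design choice: lifecycle status and business requirements are evaluated before age, so a cost-driven rule cannot override an availability requirement.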

The data lifecycle management framework should address any deviation from a typical lifecycle that may be in practice by the organization. Any departmental exceptions that need to be addressed in the policy and standards should consider the required response to events. Examples of such events include e-discovery requests, legal hold instructions and right-to-be-forgotten requests.

Advice for Cloud Service and Technology Providers

Since various organizations are likely to define different stages in their data lifecycle, cloud service providers should offer the flexibility for the organization to specifically define its data lifecycle stages and choose technology services that will adequately support its particular requirements. The organization will want the data lifecycle to be managed consistently across all environments. Policy-driven data placement rules should operate effectively across multiple cloud environments.

Questions

- Has organization approval been achieved on the data lifecycle stages taxonomy?

- Has the necessary metadata been specified to support automation of lifecycle management and controls?

- Have policy and standards been defined for the use of storage tiering as data progresses through its lifecycle?

- Have policies and standards been defined for data placement, retention and disposal to ensure that data is stored, accessed, archived and disposed of in compliance with applicable rules and regulations?

- Do the policy and standards address lifecycle exceptions?

- Have standards for the secure disposal of data been defined?

Artifacts

- Data Management Policy, Standards and Procedures – defining and operationalizing data lifecycle management, including specification of a standard taxonomy of lifecycle stages and addressing the use of storage-tiering

- Data Management Policy, Standards and Procedures – defining and operationalizing data placement, retention and disposal, addressing compliance with applicable rules and regulations and including coverage of lifecycle exceptions and secure disposal of data

- Data Catalog Report – evidencing the metadata required for data lifecycle management

Scoring

Not Initiated

No formal Data Lifecycle Management framework exists.

Conceptual

No formal Data Lifecycle Management framework exists, but the need is recognized, and the development is being discussed.

Developmental

A formal Data Lifecycle Management framework is being developed.

Defined

A formal Data Lifecycle Management framework is defined and validated by stakeholders.

Achieved

A formal Data Lifecycle Management framework is established and adopted by the organization.

Enhanced

A formal Data Lifecycle Management framework is established as part of business-as-usual practice with continuous improvement.

5.1.2 The data lifecycle is implemented and managed

Description

All data assets must be managed throughout the entire data management lifecycle, whether the data is on-premises or in a cloud environment. Managing data in a cloud environment offers opportunities for metadata-driven automation of the data lifecycle management processes, especially for data retention, archiving, disposal and destruction.

Objectives

- Implement data lifecycle management processes, procedures, controls, and roles and responsibilities that cover both on-premises and cloud environments.

- Ensure that all data assets are mapped to an appropriate retention schedule and the applicable stages in the data lifecycle.

- Ensure the minimum metadata required for data lifecycle management is collected to comply with applicable laws, regulations, contracts and internal policies.

- Implement and demonstrate the effectiveness of controls to respond to e-discovery requests in a timely manner.

- Implement metadata-driven systems and processes in which data is disposed of, removed from operational use or destroyed (not recoverable by any forensic means).

Advice for Data Practitioners

The data lifecycle management framework describes the typical phases through which data moves in an organization. This movement begins with creation or acquisition and continues through processing, maintenance, archiving according to business need and disposal. The framework also describes the different applications and use-cases for data lifecycle management, including records management.

The figure below depicts a typical data lifecycle.

Generally, data processing at each phase involves:

- Creation / Acquisition – new data is proposed, created or received by an organization.

- Processing – data is extracted from internal and external sources. Data quality rules and standards are applied, and appropriate data remediation is put into effect.

- Persistence – data is cataloged with metadata, described using a standard dictionary or taxonomy, and mapped to an appropriate retention schedule.

- Maintenance – data is maintained according to defined processes related to defined rules and dimensions such as quality, timeliness and accuracy.

- Distribution – data is distributed according to defined methods and services for controlled data access (data-as-a-service).

- Consumption – data is accessible and retrievable in a secure and timely manner, according to business requirements. Data may be moved to alternative storage tiers to reduce cost and increase operational capacity as data becomes obsolete.

- Archiving – data retained for legal and regulatory purposes may be moved to an archive environment. The aim is to reduce costs while maintaining compliance with access and retrieval requirements.

- Disposal – data is deleted and removed from operational access. While such data may be technically recoverable, it is no longer accessible to users or data consumers.

- Destruction – data is permanently destroyed such that it is no longer recoverable by any reasonable forensic means.

Data governance occurs throughout the entire lifecycle, and the governance is typically codified in data policies and standards. At any stage of the lifecycle, data may be of specific interest to regulators and litigators. Consequently, the data lifecycle management framework, processes and systems must provide reliable methods for cataloging and protecting data from deletion until all legal holds or preservation orders are removed.
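The protection described above can be illustrated with a small disposal check that refuses to release data while any legal hold or preservation order remains. This is a sketch under stated assumptions: the `RetentionRecord` structure, its field names and the hold identifiers are hypothetical, and a real implementation would read them from the data catalog.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Set


@dataclass
class RetentionRecord:
    asset_id: str
    retain_until: date  # end of the retention period from the retention schedule
    legal_holds: Set[str] = field(default_factory=set)  # active hold identifiers


def can_dispose(record: RetentionRecord, today: date) -> bool:
    """Data may be disposed of only when no hold is active and retention has expired."""
    if record.legal_holds:
        return False
    return today > record.retain_until


today = date(2024, 1, 1)
expired = RetentionRecord("asset-1", retain_until=date(2020, 12, 31))
in_retention = RetentionRecord("asset-2", retain_until=date(2030, 12, 31))

eligible_before_hold = can_dispose(expired, today)

expired.legal_holds.add("HOLD-42")  # a hold is applied to the asset
eligible_during_hold = can_dispose(expired, today)

expired.legal_holds.discard("HOLD-42")  # the hold is lifted
eligible_after_hold = can_dispose(expired, today)
```

Checking for holds before checking the retention date means that an exception always takes precedence over the normal schedule.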

An organization should define rules, processes and controls to efficiently manage multiple document versions, for both structured and unstructured documents. Rules should exist to ensure that earlier versions of data are treated with the same protections as the current version. When practicable, earlier versions of documents that do not need to be retained should be automatically deleted.

Data retention requirements vary according to data type. The taxonomy for data types and the corresponding retention schedules should be defined for the entire organization and consistently applied to all divisions and departments. Data practitioners should establish processes to review and validate data cataloging (with relevant metadata), searching, access and retrieval practices. The aim is to ensure that all data, including archived data, is readily locatable and accessible. Archived data should be readable and available in a format that renders the data accurately.

Practitioners should develop and implement an archiving solution that meets the organization's requirements while remaining compliant with applicable laws and regulations. An example is a region-specific requirement such as Write Once Read Many (SEC Rule 17a-4). If data must remain available after its retention period, practitioners should ensure all actions are taken to protect against the inappropriate or incorrect use of the data. For example, if data needs to be retained beyond its retention period for analytics purposes, it must not be possible to link it to an individual. Refer to CDMC 4.1 Data is Secured and Controls are Evidenced.

Practitioners should implement automatic, policy-driven tiering that aligns with the data lifecycle while satisfying the organization's data requirements and complying with applicable laws and regulations. Seek to reduce costs by optimizing the use of storage tiers. Tiers vary by many factors, including cost, location, availability, resiliency, speed of access and retrieval, and minimum storage durations.

Metadata about location and legal ownership may be required to establish and enforce clear access and transfer rules. Decisions about backup, archiving, access, retrieval, legal hold and disposal will be sensitive to legal ownership and location. Classification of data may influence storage decisions and controls of data at various stages of the data lifecycle.

Consider segregating newer data assets from older data assets—which may not have enough metadata for identification. Older data assets are often kept beyond required retention periods. It is best to develop a risk-based methodology that makes disposal decisions with the best available data. Practitioners should avoid migrating data assets to cloud storage if that data lacks the minimum metadata. Before data assets are moved to a cloud environment, they should be reviewed and classified. The data should be disposed of if it is over-retained.

The training curriculum for the organization should include cloud environment user training. All cloud computing roles should be identified, and personnel in these roles need to understand governance, general architecture, and the procedures for disposal or amendment of holds and retention schedules.

Advice for Cloud Service and Technology Providers

Generally, the organization owns the data, while the provider is responsible for adhering to regulatory requests. The provider typically discharges this responsibility on a best-endeavors basis, subject to encryption and technical access constraints. In particular, the provider must provide the necessary mechanisms and functionality to manage data throughout the entire lifecycle. Business engagement agreements typically contain contract terms of shared responsibility for the custody of data in the context of any necessary legal compliance actions.

The provider should provide the methods for associating the minimum set of mandatory metadata with data and records. Policy-driven rules must directly relate to metadata such as age, last modified date, last accessed date, lifecycle status, and other metadata stored in the data catalog. Providers should offer flexible metadata APIs to access metadata associated with data retention schedules and exceptions to those schedules driven by business events, such as the need to apply legal holds.

The provider must ensure that a workflow for disposal or amendment of legal holds and retention schedules is transparent and granular enough to provide an audit trail for a decision. Functionality should also enable an organization to receive alerts for key events such as the end of a customer relationship or the application of a legal hold. Such events may affect the retention period, lifecycle status, or data tiering. In addition, the provider should consider providing auditable proof of data movement, retention, and disposal decisions.

The tiering of data and records should be policy-driven and automated, and providers should offer services that support tiering. Many providers offer automatic archiving and disposal, which can significantly reduce effort and costs. Another common offering is automatic switching to larger storage tiers, which can help control costs as an organization increases its data volumes.

Providers should ensure that any data destruction task is complete, such that the data is no longer recoverable by any reasonable forensic means. Note that this is distinct from data disposition, in which data has moved into the retention staging and is sent for archiving.

Each cloud service provider should provide training that explains the services that support automatic data retention and disposal and the functionality for managing exceptions to retention schedules.

Questions

- Do data lifecycle management processes, procedures, controls, roles and responsibilities cover both on-premises and cloud environments?

- Is the lifecycle stage of each data asset recorded and maintained?

- Has each data asset been mapped to an appropriate retention schedule?

- Has the metadata required by data lifecycle management been collected?

- Can e-discovery requests be responded to in a timely manner?

- Is data archiving and destruction automated and driven by metadata?

Artifacts

- Data Lifecycle Management Procedures and Controls – with defined roles and responsibilities, applying to both on-premises and cloud environments

- Data Catalog Report – demonstrating recording of data lifecycle stage of data assets and capture of required DLM metadata, and mapping data assets to retention schedules

- e-Discovery Request Logs – demonstrating timeliness of response

- Data Archiving / Destruction Logs – demonstrating metadata-driven execution

Scoring

Not Initiated

The data lifecycle is not formally implemented and managed.

Conceptual

The data lifecycle is not implemented and managed formally, but the need is recognized, and the development is being discussed.

Developmental

Formal implementation and management of the data lifecycle are being planned.

Defined

Formal implementation and management of the data lifecycle are defined and validated by stakeholders.

Achieved

Formal management of the data lifecycle is established and adopted by the organization.

Enhanced

Formal management of the data lifecycle is established as part of business-as-usual practice with continuous improvement.

5.2 Data Quality is Managed

Management of data quality starts with the management of data quality rules. These rules must then be deployed and executed to operationalize data quality measurement. Data quality metrics that result from this measurement must be reported and made available to owners, data producers and data consumers. Processes must be established to manage the reporting, tracking and resolution of data quality issues that are identified.

5.2.1 Data quality rules are managed

Description

Data quality rules management includes rules governance to control how rules are put into place, rules lifecycle management to handle creation, maintenance and retirement of data quality rules, and rules change management and auditability to ensure that rule-based decisions can be properly understood retroactively.

Objectives

- Establish and enforce standards for data quality rules.

- Define processes, roles and responsibilities for the creation, review and deployment of data quality rules.

- Specify and approve the lifecycle states for data quality rules.

- Define processes for the management of transitions between lifecycle states.

- Implement regular reviews of data quality rules.

- Ensure that changes to data quality rules can be audited.

Advice for Data Practitioners

Data quality rules are the cornerstone of Data Quality Management, formalizing the requirements against which data quality will be assessed. As the access to and use of data increases in cloud environments, so will the number of stakeholders involved in defining and executing data quality rules. The additional stakeholder involvement increases the importance of effective rules management to ensure they can be applied consistently across multiple clouds and on-premises environments.

Data quality rules management covers rules definition standards, rules governance, rules lifecycle management and rules change management and auditability:

- Rules definition standards underpin consistency in the data quality rule definition, for example, by standardizing the categorization of rules according to core dimensions of data quality.

- Rules governance defines the processes, roles and responsibilities for how rules are created, reviewed and deployed.

- Rules lifecycle management defines the states that rules go through and how the transitions between those states are managed.

- Rules change management and auditability define how and when rules need to be changed and how those changes are tracked for later auditing.

Data quality rules encapsulate the expectations of data stakeholders. The specification of standards for data quality rules supported by policy for their adoption will provide consistency across the many data quality rules in an organization. The standard should ensure that data quality rules are easy to understand and explain and that they can be implemented. It should specify how rules should link to data assets and the definitions in the data catalog. It should also specify where the rules will be cataloged and how they will be cross-referenced to the applicable data.

Organizations should adopt a standard set of types or dimensions of data quality rules. For example, the EDM Council Data Management Business Glossary includes definitions of seven data quality dimensions. The relevance of each dimension should be considered for each data element for which rules are being specified. The appropriate number of data quality rules may depend on the business criticality of the asset. Data with higher business criticality will require greater coverage of rule dimensions.

Validation of a rule’s adherence to the data quality rule standard is one aspect of rules governance. Automation of this validation should be considered. Responsibilities for creating rules and for their subsequent review, approval and deployment must be clearly defined. The processes for these activities should be standardized. However, organizations should consider applying different levels of governance depending on the business criticality of the data.

Data quality rules should be reviewed periodically to ensure they remain relevant. Reviews may result in decisions to update or decommission rules. The decommissioning must follow the change management processes.

As the volume and application of data quality rules increases in an organization, the need for clarity on the status of any particular rule becomes increasingly important. Lifecycle states should be defined that indicate a rule’s progression from drafting, through approval to implementation and potentially to retirement. The rule governance processes and responsibilities should reference transitions between states. The organization should consider requirements to track and manage the state of groups of rules.
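One way to make rule lifecycle states and their transitions explicit is a small state machine, sketched below. The four states follow the progression named above (drafting, approval, implementation, retirement). The specific set of allowed transitions, including sending an approved rule back to draft, is an assumption for illustration and would be set by the organization's governance process.

```python
from enum import Enum


class RuleState(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    IMPLEMENTED = "implemented"
    RETIRED = "retired"


# Hypothetical transition map: each state lists the states it may move to.
ALLOWED_TRANSITIONS = {
    RuleState.DRAFT: {RuleState.APPROVED},
    RuleState.APPROVED: {RuleState.IMPLEMENTED, RuleState.DRAFT},
    RuleState.IMPLEMENTED: {RuleState.RETIRED},
    RuleState.RETIRED: set(),
}


def transition(current: RuleState, target: RuleState) -> RuleState:
    """Return the new state, or raise ValueError if the move is not permitted."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"transition {current.value} -> {target.value} is not allowed")
    return target


state = RuleState.DRAFT
state = transition(state, RuleState.APPROVED)
state = transition(state, RuleState.IMPLEMENTED)
```

Keeping the transition map as data rather than scattered conditionals makes it straightforward to review and to place under the same change management as the rules themselves.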

The results of executing data quality rules will be used to drive business decisions, particularly whether data is fit-for-purpose. The data quality rules should be treated as assets with version history maintained to enable auditability from decisions back to the rules on which they were founded. Rule creation and change management should encompass rule description, version control, change approval and deployment process, and should align with the organization's change management standards.
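Treating a rule as a versioned asset could look like the following sketch, in which every change appends an immutable version record rather than overwriting the rule. The class names, the fields and the sample rule `DQ-001` are hypothetical. The point is only that a past decision can be traced back to the exact rule text and approver in force at the time.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RuleVersion:
    number: int
    expression: str  # the rule logic, stored here as plain text
    approved_by: str
    change_note: str


class VersionedRule:
    """A data quality rule whose full change history is retained."""

    def __init__(self, rule_id: str, expression: str, approved_by: str) -> None:
        self.rule_id = rule_id
        self._history: List[RuleVersion] = [
            RuleVersion(1, expression, approved_by, "initial version")
        ]

    def amend(self, expression: str, approved_by: str, change_note: str) -> RuleVersion:
        """Append a new version; earlier versions are never modified."""
        version = RuleVersion(len(self._history) + 1, expression, approved_by, change_note)
        self._history.append(version)
        return version

    @property
    def current(self) -> RuleVersion:
        return self._history[-1]

    def at_version(self, number: int) -> RuleVersion:
        """Look up the rule as it stood at a given version number."""
        if not 1 <= number <= len(self._history):
            raise KeyError(f"{self.rule_id} has no version {number}")
        return self._history[number - 1]


rule = VersionedRule("DQ-001", "balance >= 0", approved_by="data.owner")
rule.amend("balance >= -100", approved_by="data.owner", change_note="allow small overdrafts")
```

A decision record that stores the rule identifier and version number can then be audited by calling `at_version` to retrieve the rule as it was applied.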

The data quality rule standard itself should be included in the scope of governance and change management.

Advice for Cloud Service and Technology Providers

Any data quality tool should provide the ability to define and manage rules across multiple environments, whether cloud or on-premises.

Data quality cloud service and technology providers can facilitate consistent definition and implementation of data quality rules by providing access to metadata on all rules stored in or implemented by their products or services.

Data quality cloud service and technology providers should offer functionality such as workflow integration and feedback capture to support an organization’s data quality rule governance, lifecycle management and change management processes.

Data quality cloud service and technology providers should enable automated validation of data quality rules against an organization’s standards for those rules.

Questions

- Has a standard for data quality rules been defined?

- Does the standard include a categorization scheme for data quality dimensions?

- Have processes, roles, and responsibilities been defined to create, review, and deploy data quality rules?

- Have the lifecycle states for data quality rules been defined and approved?

- Do standard processes exist for the management of transitions of data quality rules between lifecycle states?

- Have regular reviews of data quality rules been implemented?

- Are changes to data quality rules recorded and auditable?

Artifacts

- Data Quality Rule Standard – including a categorization scheme for data quality dimensions

- Data Quality Rule Governance Procedures – with defined roles and responsibilities for the creation, review and deployment of data quality rules

- Data Quality Rule Lifecycle Management Processes – referencing standard lifecycle states and addressing the management of transitions of data quality rules between lifecycle states

- Data Quality Rule Status Report – generated from rules repository and including date of the last review

- Data Quality Rule Change Management Log

Scoring

Not Initiated

No formal data quality rules management exists.

Conceptual

No formal data quality rules management exists, but the need is recognized, and the development is being discussed.

Developmental

Formal data quality rules management is being developed.

Defined

Formal data quality rules management has been defined and validated by stakeholders.

Achieved

Formal data quality rules management is established and adopted by the organization.

Enhanced

Formal data quality rules management is established as part of business-as-usual practice with continuous improvement.

5.2.2 Data quality is measured

Description

Data quality measurement is the ability to capture metrics generated by executing the data quality rules established in CDMC 5.2.1 Data quality rules are managed.

Objectives

- Define standard processes for data quality measurement that provide consistency across cloud and on-premises environments.

- Generate data quality metrics that align and integrate with relevant metadata. The metrics and corresponding measures should be transparent, traceable and auditable.

- Execute data quality processes in a timely, accurate and consistent manner throughout the data lifecycle.

- Implement regular reviews of the scalability and efficiency of data quality measurement processing.

- Seek guidance from data owners to clearly define and communicate data quality roles and responsibilities to appropriate stakeholders.

Advice for Data Practitioners

Throughout many industries, there continues to be an increase in the volume of cloud data storage, the number of data quality rules that interact with this data and the number of data consumers accessing this data. It is critically important that all stakeholders interested in cloud data storage receive accurate and timely data quality assessments within their cloud environments. Critically, these assessments depend upon the establishment and operationalization of a data quality measurement program.

Data exists primarily in two states—data-at-rest and data-in-motion. This sub-capability advocates frequent capture of data quality measurements—both from data-at-rest and data-in-motion.

Examining data-at-rest

Practitioners should periodically examine data-at-rest to ensure that any data changes are compliant with all applicable data quality rules of the organization. Perform data-at-rest analysis and measurement in a non-blocking way, such that operational dependencies on the data are not compromised. When the analysis is complete, perform routine data remediation following data quality rules and established metrics. A careful approach will ensure data-at-rest is of the highest quality. Refer to CDMC 5.2.4 Data quality issues are managed.

Examining data-in-motion

Data quality measurements for data-in-motion typically run within a data production process and may sometimes be performed in a blocking way. Data quality measurement outputs may be intentionally configured to prevent recent data products from being published. It is important to realize that the tight coupling between data production and measurement processes may limit the flexibility and scalability of data quality controls.
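A blocking measurement of this kind can be pictured as a gate placed in front of the publish step. The sketch below is illustrative only and is not part of the framework: the function names `pass_rate` and `gate_publication`, the representation of a rule as a Python predicate, and the `min_pass_rate` threshold are all assumptions made for the example.

```python
from typing import Callable, Iterable

# A rule is a hypothetical predicate that returns True when a record passes.
Rule = Callable[[dict], bool]


def pass_rate(records: Iterable[dict], rules: list[Rule]) -> float:
    """Fraction of records that satisfy every rule (1.0 for an empty batch)."""
    records = list(records)
    if not records:
        return 1.0
    passed = sum(1 for r in records if all(rule(r) for rule in rules))
    return passed / len(records)


def gate_publication(records: list[dict], rules: list[Rule],
                     min_pass_rate: float = 0.95) -> tuple[bool, float]:
    """Blocking check: return (publish?, measured pass rate)."""
    rate = pass_rate(records, rules)
    return rate >= min_pass_rate, rate


batch = [
    {"id": 1, "amount": 10.0},
    {"id": 2, "amount": None},  # fails the completeness rule below
    {"id": 3, "amount": 7.5},
    {"id": 4, "amount": 3.0},
]
rules = [lambda r: r["amount"] is not None]

publish, rate = gate_publication(batch, rules, min_pass_rate=0.9)
print(publish, rate)
```

Because the gate sits inside the production process, a failed check holds back the whole batch, which is the coupling between production and measurement that the paragraph above warns about.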

For published data, whether data-at-rest or data-in-motion, end-users should retain the option of either consuming or refusing to use the data. The user's choice would depend on specific data quality limits and threshold requirements.

Take care to explicitly define all the data quality control points at which measurements will be taken. The data producer and data consumer are both accountable for ensuring data quality. Place control points near the data source and data consumption to address these tradeoffs:

- efficiency in identifying issues and reducing the negative consequences of data that is not fit-for-purpose (achieved by early measurement)

- accuracy of the measurement outputs to provide value for data consumers (typically achieved by measurements downstream in the data processing pipeline)

- data latency caused by the additional processing time necessary for data measurement in the synchronous mode (early measurement is likely to hold up more data when failures are detected)

Measuring other types of data

When capturing data quality measurements, consider all forms of data that require monitoring—including semi-structured and unstructured data. Also, take care to perform data quality monitoring that is most applicable to the data under examination.

Operational metadata for data quality measurement should support:

- Tracking the comprehensiveness of measurement coverage for data assets against established standards and policies.

- Monitoring the data quality measurement process and costs.

- Visibility into the operational status of data quality measurement (examples of status include not started, initiated, in progress and comprehensive)

- Traceability from data quality rules and data quality measurements through to data quality outputs.
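One way to picture the operational metadata listed above is as a small record per measurement run. The following is a minimal sketch under stated assumptions: the `MeasurementRun` fields, the `cost_units` measure and the `coverage` helper are hypothetical, and only the status values are taken from the list above.

```python
from dataclasses import dataclass
from enum import Enum


class RunStatus(Enum):
    # Status values as named in the text above.
    NOT_STARTED = "not started"
    INITIATED = "initiated"
    IN_PROGRESS = "in progress"
    COMPREHENSIVE = "comprehensive"


@dataclass
class MeasurementRun:
    asset_id: str             # data asset that was measured
    rule_id: str              # data quality rule that was executed (traceability)
    status: RunStatus
    records_checked: int = 0
    records_failed: int = 0
    cost_units: float = 0.0   # hypothetical cost measure, e.g. compute seconds


def coverage(runs: list[MeasurementRun], required_assets: set[str]) -> float:
    """Share of required assets with at least one finished measurement run."""
    if not required_assets:
        return 1.0
    measured = {r.asset_id for r in runs if r.status is RunStatus.COMPREHENSIVE}
    return len(measured & required_assets) / len(required_assets)


runs = [
    MeasurementRun("customers", "R1", RunStatus.COMPREHENSIVE, 1000, 12, 3.2),
    MeasurementRun("orders", "R2", RunStatus.IN_PROGRESS),
]
required = {"customers", "orders", "payments", "invoices"}
print(coverage(runs, required))
print(sum(r.cost_units for r in runs))
```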

Balancing centralization and federation in measuring data quality

A best-practice data quality measurement model balances centralized and federated approaches to data quality. With the centralized model, a central team provides a data quality measurement service to all data domains. Measurement standards, tooling and outputs are provided centrally. Each data domain supplies data quality rules, exposes data for measurement, and performs actions according to measurement outcomes. This approach benefits from higher consistency in execution and adherence to standards, less complexity and lower effort for each domain. The tradeoff with this approach is that it provides less flexibility and control for the data domains.

With the federated data quality model, a central team provides data quality measurement standards and perhaps some tools. Data quality measurements and capture of outputs occur locally, in each data domain. This approach may benefit from higher flexibility and level of control for domains, but it results in more overall effort and some risk of divergence among the various data domains.

Other measurement considerations

For environments that exhibit frequently changing data quality rules, measurement should align with the existing governance processes. This alignment promotes consistency and traceability, and it enables data quality measurement to benefit from the standard capabilities provided by governance mechanisms.

In addition, practitioners should seek to monitor and optimize data quality measurement proactively. Since measurements will need to scale together with an ever-increasing volume of data assets, it is important to ensure that cost and efficiency targets related to data quality measurement remain within acceptable limits.

These are some methods that support such monitoring and optimization:

- Establishing operational metadata and Service Level Agreements around data quality measurements.

- Performing data quality measurements incrementally, wherever possible.

- Ensuring the data quality infrastructure can scale as data assets increase in volume.

- Establishing SLOs and a production support framework for data quality measurement capabilities.
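Incremental measurement, one of the methods listed above, can be sketched with a simple watermark: each run checks only rows modified since the previous run. This is an illustration under assumptions, not a prescribed design; the `modified_at` field, the `measure_incrementally` function and the single-rule interface are hypothetical.

```python
from datetime import datetime


def measure_incrementally(rows, rule, watermark):
    """Check only rows modified after `watermark`.

    Returns (rows_checked, rows_failed, new_watermark).
    """
    checked = failed = 0
    new_watermark = watermark
    for row in rows:
        if row["modified_at"] <= watermark:
            continue  # already measured in an earlier run
        checked += 1
        if not rule(row):
            failed += 1
        new_watermark = max(new_watermark, row["modified_at"])
    return checked, failed, new_watermark


rows = [
    {"id": 1, "email": "a@example.com", "modified_at": datetime(2024, 1, 1)},
    {"id": 2, "email": "", "modified_at": datetime(2024, 1, 3)},
    {"id": 3, "email": "c@example.com", "modified_at": datetime(2024, 1, 4)},
]


def has_email(row):
    return bool(row["email"])


first_run = measure_incrementally(rows, has_email, datetime(2024, 1, 2))
# A second run with the returned watermark finds nothing new to check.
second_run = measure_incrementally(rows, has_email, first_run[2])
print(first_run, second_run)
```

The cost of each run then scales with the volume of changed data rather than with the total size of the data asset.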

Advice for Cloud Service and Technology Providers

Cloud platforms and data quality measurement tooling should allow organizations to maintain consistency by deploying data quality measurement processes in heterogeneous and multi-cloud environments. Measurement tooling should exploit elastic cloud infrastructure to scale measurement processes as data volumes increase. Cloud data platforms should provide an option to run data quality measurement processes without extracting any of the data to support efficiency and data security.

Cloud platforms should provide common interfaces for capturing and storing operational metadata that supports data quality measurement and enables a broad range of data consumers to access this metadata. Cloud data platforms typically provide cost-efficient and execution-efficient data validation capabilities by supporting easy identification of new and modified data.

Questions

- Have standard processes for data quality measurement been defined that provide consistency across cloud and on-premises environments?

- Have standards been defined and adopted for the design of data quality measurement control points and processing?

- Have standard processes for data quality measurement been implemented, such that these processes execute in a timely, accurate and consistent manner throughout the data lifecycle?

- Have regular reviews of the scalability and efficiency of data quality measurement processing been implemented?

- Have data quality roles and responsibilities been communicated to the appropriate stakeholders?

Artifacts

- Data Quality Process Documentation – providing consistency across cloud and on-premises environments

- Data Quality Measurement Standard – covering the design of data quality measurement control points and processing

- Data Quality Measurement Review Report – assesses and provides recommendations on the scalability and efficiency of data quality measurement processing

- Data Quality Measurement Operating Model – covering implementation, ongoing support and alignment with data ownership

- Data Quality Measurement Review Report – exhibits the consumption of data catalog metadata to demonstrate coverage and comprehensiveness of data quality measurement

Scoring

Not Initiated

No formal data quality measurement exists.

Conceptual

No formal data quality measurement exists, but the need is recognized, and development is being discussed.

Developmental

Formal data quality measurement is being developed.

Defined

Formal data quality measurement is defined and validated by stakeholders.

Achieved

Formal data quality measurement is established and adopted by the organization.

Enhanced

Formal data quality measurement is established as part of business-as-usual practice with continuous improvement.

5.2.3 Data Quality metrics are reported

Description

Data quality metrics result from the application of data quality rules. Reporting on data quality metrics disseminates information to data stewards, data consumers, data producers, data governance teams and other business stakeholders interested in a particular data domain, category or feed. Such reports may take the form of dashboards, scorecards, interactive reports or system alerts.

Objectives

- Formalize the processes for the design and approval of data quality metrics reports that take data sensitivity and data consumer needs into account.

- Establish guidance for the design of relevant and actionable data quality metrics reports.

- Produce data quality metrics reports that combine measurements from all data quality rules, data assets and control points.

- Ensure that data quality metrics reports can combine time-series data quality measurements supporting the identification and analysis of trends.

- Ensure data quality metrics reports are accessible from the data catalog to support the data quality management process.

Advice for Data Practitioners

In data management, data quality metrics reporting serves two main purposes. Complete identification of good data builds trust and confidence in the data. In addition, identifying defective data informs stakeholders of the need to assess the impact of data quality issues, driving follow-up activities to investigate, prioritize and remediate the issues.

Data quality measurement is even more important in a cloud environment since many of the restrictions that are imposed on on-premises systems do not apply in a cloud environment. Many cloud computing environments provide various standard and comprehensive abilities for measuring data quality and immediate alerting of critical issues. Therefore, it is important to design data quality metrics reports that are relevant and actionable for all intended recipients.

Many types of stakeholders will make use of data quality metrics reports. However, the importance of ensuring the viability and accountability of the data that corresponds to data quality metrics demands that the data owner is the accountable recipient of the metric reporting. The data owner will solicit input from the other data stakeholders and initiate an issues management process after assessing the impact of the defective data. Refer to CDMC 5.2.4 Data quality issues are managed.

Designing data quality metrics reports

When designing data quality metric reports, there are many considerations. Data quality metrics reporting should enable stakeholders to make informed decisions on whether the data is fit-for-purpose. Data owners and stakeholders must consider whether the data is fit-for-purpose when defining data quality metrics and deciding which will be reported. For example, some use cases may require high-quality data (such as customer billing and credit-risk modeling). In contrast, other use cases may tolerate data omissions or errors (such as marketing communications). A common approach for convenient aggregation of various use cases is to use a data quality scorecard. It should be possible to view individual metrics, aggregated metrics and an overall metric for each data domain. Data quality metrics should be available in the data catalog.
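The scorecard idea can be illustrated with a small aggregation that exposes individual metrics, an overall metric per data domain, and a fit-for-purpose flag per use case. This is a minimal sketch: the domain names, scores, thresholds and the choice of a simple mean as the overall metric are all assumptions for the example, and an organization may well prefer weighted aggregation.

```python
from collections import defaultdict
from statistics import mean

# Each measurement: (data domain, rule name, pass rate between 0 and 1).
measurements = [
    ("billing", "completeness", 0.99),
    ("billing", "valid_values", 0.97),
    ("marketing", "completeness", 0.80),
    ("marketing", "conformity", 0.90),
]

# Hypothetical fit-for-purpose thresholds: billing tolerates fewer defects.
thresholds = {"billing": 0.99, "marketing": 0.80}


def build_scorecard(measurements):
    """Return {domain: {"metrics": {rule: score}, "overall": mean score}}."""
    by_domain = defaultdict(dict)
    for domain, rule, score in measurements:
        by_domain[domain][rule] = score
    return {
        domain: {"metrics": metrics, "overall": mean(metrics.values())}
        for domain, metrics in by_domain.items()
    }


scorecard = build_scorecard(measurements)
fit = {d: card["overall"] >= thresholds[d] for d, card in scorecard.items()}
print(scorecard)
print(fit)
```

With these sample values the marketing domain meets its tolerant threshold while billing does not meet its stricter one, even though billing has the higher overall score.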

Information in data quality metrics reports should be actionable and informative. Provide summaries of data quality issues and their status: open, pending investigation or closed/resolved. Avoid excessive amounts of extraneous information. Highlight data quality defects that correspond to standard data quality dimensions such as completeness, conformity and valid values. Provide audit information and any necessary technical metrics such as the number of records transferred and any data transmission failures.

To facilitate issue management, consider implementing visualizations that provide drill-down capability into the details of defective data. Data metrics can be captured and presented at a data element level, across elements and data sets but should only include relevant metrics for those who access the reports. Use visualization techniques such as trends, summaries, ranges and colored ratings to help users locate and understand the information. Also, organize data quality metrics using groups, aggregations, categories, business units, departments, geographies, and product lines.

Where sensible, implement trend analysis to indicate changes to data quality and support issue management and resolution. Time-series measurements can show progress in resolving data quality issues, so it is important to choose sensible tracking periods (daily, weekly, and monthly).
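As a rough illustration of trend analysis over time-series measurements, the sketch below compares the mean of the most recent tracking window with the window before it. The window length, the tolerance and the three labels are assumptions chosen for the example rather than recommended values.

```python
from statistics import mean

# Hypothetical daily pass rates for one data quality rule, oldest first.
daily_pass_rates = [0.90, 0.91, 0.89, 0.92, 0.94, 0.95, 0.96, 0.97]


def trend(series, window=4, tolerance=0.005):
    """Compare the mean of the latest window with the preceding window."""
    if len(series) < 2 * window:
        return "insufficient data"
    previous = mean(series[-2 * window:-window])
    latest = mean(series[-window:])
    if latest - previous > tolerance:
        return "improving"
    if previous - latest > tolerance:
        return "degrading"
    return "stable"


print(trend(daily_pass_rates))
```

Changing `window` corresponds to choosing a different tracking period; a short window reacts quickly but is more sensitive to noise in individual measurements.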

To see the impact of real-time adjustments, users must have the ability to refresh data quality metrics in the dashboards and reports. Provide the ability for each stakeholder to subscribe to specific categories of data quality metrics. Consider integrations with alerting and issue management systems to communicate data quality issues as they arise. Also, consider progress reporting as data quality issues are resolved.

Advice for Cloud Service and Technology Providers

Comprehensive data quality metrics reporting depends upon collating information from multiple sources. Cloud service and technology providers must offer open APIs that provide the ability to centralize data quality measurements. Providers should provide standard operational reports, including data volume and transmission details, failure information, and data sampling statistics. Data-pull and data-push subscriptions should also be available to organizations to satisfy different data consumer requirements. In addition, some organizations depend upon frequent information updates, so it is important to have the ability to update data quality metrics reporting as often as possible.

Provide standard formats for both metadata and data quality metrics. Employ standard data models for information exchange and integration to support ease-of-use and combining metrics for reports. Also, provide readily understood visualizations and interactive dashboards to improve data quality metrics reporting effectiveness for business users and data stakeholders.

Questions

- Have the processes for design and approval of data quality metrics reporting been formalized, and do they account for information sensitivity classifications and data consumer needs?

- Is guidance available on the design of relevant and actionable data quality metric reports?

- Are data quality metrics reports available, and do they present results from all data quality rules, data assets and control points?

- Do the data quality metrics reports provide flexibility in combining time-series data quality measurements?

- Are the data quality metrics reports accessible for the support of the data quality management process?

Artifacts

- Data Quality Metrics Reporting Process Document

- Data Quality Metrics Reports Design Guidance Document

- Data Quality Metrics Report Catalog – including a description and location of each report along with descriptions of time-series data quality measurements in each applicable report

Scoring

Not Initiated

No formal data quality metrics reporting exists.

Conceptual

No formal data quality metrics reporting process exists, but the need is recognized, and the development is being discussed.

Developmental

Formal data quality metrics reporting process is being developed.

Defined

Formal data quality metrics reporting is defined and validated by stakeholders.

Achieved

Formal data quality metrics reporting is established and adopted by the organization.

Enhanced

Formal data quality metrics reporting process is established as part of business-as-usual practice with continuous improvement.

5.2.4 Data Quality Issues are managed

Description

Data quality issue management entails identifying, categorizing, handling, and reporting data quality issues arising from manual or automatic data quality measurements. An organization's data quality issue management policy, standards, and processes must have consistent application in all on-premises and cloud environments.

Objectives

- Gain approval and adopt data quality issue management policy, standards and procedures that apply consistently across on-premises and cloud environments.

- Provide integrated data quality issue reporting for all on-premises and cloud environments.

- Ensure data quality issues link directly to specific data assets and the relevant metadata in the data catalog.

- Establish metrics that provide evidence for sufficient coverage and effectiveness of data quality issue management.

Advice for Data Practitioners

Increasing the degree of automation for a data quality issue management process typically drives an increase in efficiency. Since data quality issue management impacts much of an organization, seek to standardize and automate wherever practicable. Data practitioners should integrate the data quality issue management processes into the organization-wide issue management processes. The visibility and routines of organization-wide issue management activities will attract valuable stakeholder attention and marshal resources to urgent data quality tasks. In addition, practitioners should establish transparent issue management workflows that are visible to the entire organization.

Practitioners can use the values of the data quality measurements to shape a risk-based prioritization of any data quality issue. Refer to CDMC 5.2.3 Data quality metrics are reported. Practitioners should develop closure criteria for each auditable data quality issue to ensure a complete response that demonstrates how each issue is identified, how the ownership was allocated, and how the issue was prioritized, resolved, mitigated or accepted with documented risk.
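A risk-based prioritization can be as simple as combining a measured failure rate with a business criticality rating. The sketch below assumes a hypothetical `Issue` record and a three-level criticality scale; the multiplication of likelihood by impact is one common convention, not a formula defined by this framework.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    issue_id: str
    failure_rate: float  # share of records failing the rule (0 to 1)
    criticality: int     # hypothetical scale: 1 (low) to 3 (high)


def priority_score(issue: Issue) -> float:
    """Simple risk score: likelihood (failure rate) times impact (criticality)."""
    return issue.failure_rate * issue.criticality


issues = [
    Issue("DQ-1", failure_rate=0.20, criticality=1),
    Issue("DQ-2", failure_rate=0.10, criticality=3),
    Issue("DQ-3", failure_rate=0.02, criticality=2),
]
ranked = sorted(issues, key=priority_score, reverse=True)
print([issue.issue_id for issue in ranked])
```

Here the issue with the highest failure rate does not rank first, because a lower failure rate on a more critical data asset carries more risk under this scoring.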

Many data quality issues are manageable as part of a larger problem. Practitioners should establish processes for automating the identification of common root causes across multiple issues. These processes will support the ability to prioritize more effectively and allocate resources to the highest priority issues.
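Automated identification of common root causes can start with something as plain as grouping issues on shared attributes. In this sketch the attributes `source_system` and `failed_rule` are hypothetical stand-ins for whatever signals an organization uses to recognise a shared cause.

```python
from collections import defaultdict

# Hypothetical issue records with two attributes used as a root-cause key.
issues = [
    {"id": "DQ-1", "source_system": "crm", "failed_rule": "email_format"},
    {"id": "DQ-2", "source_system": "crm", "failed_rule": "email_format"},
    {"id": "DQ-3", "source_system": "erp", "failed_rule": "currency_code"},
    {"id": "DQ-4", "source_system": "crm", "failed_rule": "email_format"},
]


def group_by_root_cause(issues):
    """Cluster issue ids by (source system, failed rule), largest cluster first."""
    groups = defaultdict(list)
    for issue in issues:
        key = (issue["source_system"], issue["failed_rule"])
        groups[key].append(issue["id"])
    return sorted(groups.items(), key=lambda kv: len(kv[1]), reverse=True)


clusters = group_by_root_cause(issues)
for cause, issue_ids in clusters:
    print(cause, issue_ids)
```

Resolving the largest cluster first closes several issues with one remediation, which is the prioritization benefit described above.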

It is good practice to annotate data assets with descriptive metadata upstream in a data processing pipeline. Such metadata would minimally include reference data, data inventory and lineage. Data quality issues can be automatically attributed to relevant data owners, data producers, and data consumers with some additional configuration. Practitioners will benefit substantially by examining the data catalog to identify the responsible data owner and assigning accountability for specific data quality issues. This step minimizes manual triage and supports issue ownership allocation against the root cause rather than at the point of discovery.
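Allocating ownership against the root cause rather than the point of discovery can be sketched as a walk up the lineage recorded in the data catalog. The catalog structure below is hypothetical and deliberately simplified: each asset has one owner and at most one upstream asset, and the lineage is assumed to contain no cycles.

```python
# Hypothetical catalog: each asset has an owner and at most one upstream asset.
catalog = {
    "crm.customers": {"owner": "alice", "upstream": None},
    "staging.customers": {"owner": "bob", "upstream": "crm.customers"},
    "mart.customer_360": {"owner": "carol", "upstream": "staging.customers"},
}


def assign_owner(discovered_at, failing_assets, catalog):
    """Walk upstream from the point of discovery while the defect is still
    present, and return (asset, owner) for the most upstream failing asset."""
    asset = discovered_at
    while True:
        upstream = catalog[asset]["upstream"]
        if upstream is None or upstream not in failing_assets:
            return asset, catalog[asset]["owner"]
        asset = upstream


# The defect was noticed in the mart, but staging already fails the same rule.
failing = {"mart.customer_360", "staging.customers"}
print(assign_owner("mart.customer_360", failing, catalog))
```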

Data practitioners should also generate reports that compile the stakeholder accountability matrix of the organization. This matrix should include the extent of accountability for business process owners, technology platform owners, data stewards and data owners.

Advice for Cloud Service and Technology Providers

A key driver of complexity in data quality issue management is the potential variety of participants involved at different steps in the process. Some of these roles are the issue identifier, the data owner, business owners, data architects, IT personnel and various organizational stakeholders affected by data quality issues. Other participants include members of the project management office, who will need to allocate funding for issue resolution.

Cloud service and technology providers (CSPs) should provide capabilities for issue ownership identification and assignment. In addition, CSPs should provide workflow automation that facilitates active monitoring and alerts for data quality issue management.

CSPs should provide capabilities for integrating metadata for data quality issues into the general data domain management feature set. A cloud computing facility for managing such metadata provides enhanced reporting for managing high-criticality issues. Such a facility supports risk-based prioritization of a data quality issue based on the impact on relevant business processes. This prioritization method increases confidence in the integrity of those processes.

To provide a broad foundation for reporting on the impact of a data quality issue, CSPs should ensure that metadata for data quality issues is linkable to other metadata in the data catalog. For example, a data practitioner may want to identify all downstream process impacts that might result from a data quality issue. This impact identification is most easily made by enumerating all the impacted platforms, data structures, data elements and business processes. Since this requires viewing the entire data lineage and relevant metadata, CSPs should provide this ability for all cloud-based data.
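Enumerating downstream impacts amounts to a graph traversal over lineage metadata. The sketch below uses a breadth-first walk over a hypothetical lineage mapping; the asset names and the dictionary representation are assumptions, and a real catalog would expose lineage through its own interface.

```python
from collections import deque

# Hypothetical lineage: each node lists the nodes that consume it directly.
lineage = {
    "crm.customers": ["staging.customers"],
    "staging.customers": ["mart.customer_360", "mart.billing"],
    "mart.customer_360": ["report.churn"],
    "mart.billing": [],
    "report.churn": [],
}


def downstream_impact(start, lineage):
    """Breadth-first walk returning every node reachable from `start`."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        node = queue.popleft()
        for consumer in lineage.get(node, []):
            if consumer not in seen:
                seen.add(consumer)
                order.append(consumer)
                queue.append(consumer)
    return order


impacted = downstream_impact("staging.customers", lineage)
print(impacted)
```

The `seen` set keeps the walk from revisiting a node, so the function also terminates if the lineage happens to contain a cycle.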

Questions

- Have data quality issue management policy, standards and procedures been approved and adopted, and have they been applied across on-premises and cloud environments?

- Is data quality issue reporting integrated across on-premises and cloud environments?

- Do all data quality issues link to metadata in the data catalog?

- Are metrics in place that provide evidence for sufficient coverage and effectiveness of data quality issue management?

Artifacts

- Data Management Policy, Standards and Process Documents – defining and operationalizing data quality issue management that covers both on-premises and cloud environments

- Data Quality Issue Reports – presenting the integration of issues from both on-premises and cloud environments

- Data Catalog – including metadata that links data quality issues to data in the catalog

- Data Quality Issue Management Metrics Report – that provides evidence of issue management coverage and effectiveness

Scoring

Not Initiated

No formal data quality issue management exists.

Conceptual

No formal data quality issue management exists, but the need is recognized, and the development is being discussed.

Developmental

Formal data quality issue management is being developed.

Defined

Formal data quality issue management is defined and validated by stakeholders.

Achieved

Formal data quality issue management is established and adopted by the organization.

Enhanced

Formal data quality issue management is established as part of business-as-usual practice with continuous improvement.

5.3 Data Lifecycle – Key Controls

The following Key Controls align with the capabilities in the Data Lifecycle component:

- Control 11 – Data Retention, Archiving and Purging

- Control 12 – Data Quality Measurement

Each control with associated opportunities for automation is described in CDMC 7.0 – Key Controls & Automations.

Key Control 11

| Control 11: Data Retention, Archiving and Purging | |
| --- | --- |
| Component | 5.0 Data Lifecycle |
| Capability | 5.1 The Data Lifecycle is Planned and Managed |
| Control Description | Data Retention, Archiving, and Purging must be managed according to a defined retention schedule. |
| Risks Addressed | Data is not removed in line with the legislative, regulatory or policy requirements of the organization's environment, leading to increased cost of storage, reputational damage, regulatory fines, and legal action. |
| Drivers / Requirements | Organizations have a master retention schedule that determines how long data needs to be retained in each jurisdiction in which it was created, based on its classification. |
| Legacy / On-Premises Challenges | Organizations will have huge repositories of historical data, often retained to support the requirements of potential future audits. Data sets in different jurisdictions will have different retention schedules. It is difficult to comply with these requirements manually since different applicable legal requirements can modify the retention schedule. |
| Automation Opportunities | |
| Benefits | Automatically retaining, archiving, or purging data based on its classification and associated retention schedule will reduce the manual effort required to perform this function and ensure policy compliance. |
| Summary | Organizations with this automation and control can provide the necessary evidence to verify that their data is being retained, archived or purged based on the retention schedule of its classification. |
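The kind of automation this control describes can be sketched as a lookup against a master retention schedule keyed by jurisdiction and classification. Everything in the example is an assumption made for illustration: the schedule values are placeholders rather than legal guidance, and `lifecycle_action` and its `legal_hold` flag are hypothetical names.

```python
from datetime import date

# Hypothetical master retention schedule keyed by (jurisdiction, classification):
# days before archiving and days before purging. The values are placeholders.
RETENTION_SCHEDULE = {
    ("EU", "customer"): {"archive_after": 365, "purge_after": 5 * 365},
    ("US", "customer"): {"archive_after": 730, "purge_after": 7 * 365},
}


def lifecycle_action(created, jurisdiction, classification, today,
                     legal_hold=False):
    """Return 'retain', 'archive' or 'purge' for one data asset."""
    if legal_hold:
        return "retain"  # a legal hold overrides the schedule
    rule = RETENTION_SCHEDULE[(jurisdiction, classification)]
    age_days = (today - created).days
    if age_days >= rule["purge_after"]:
        return "purge"
    if age_days >= rule["archive_after"]:
        return "archive"
    return "retain"


today = date(2024, 6, 1)
print(lifecycle_action(date(2024, 1, 1), "EU", "customer", today))
print(lifecycle_action(date(2022, 1, 1), "EU", "customer", today))
print(lifecycle_action(date(2015, 1, 1), "EU", "customer", today))
print(lifecycle_action(date(2015, 1, 1), "EU", "customer", today, legal_hold=True))
```

Logging each returned action alongside the schedule entry that produced it would give the evidence trail that the Summary row refers to.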

Key Control 12

| Control 12: Data Quality Measurement | |
| --- | --- |
| Component | 5.0 Data Lifecycle |
| Capability | 5.2 Data Quality is Managed |
| Control Description | Data Quality Measurement must be enabled for sensitive data with metrics distributed when available. |
| Risks Addressed | Data is not consistently fit for the organization's purposes, resulting in the inability to provide expected customer service, process breaks, the inability to demonstrate risk management, inefficiencies, and a lack of trust in the data and decisions based on flawed information. |
| Drivers / Requirements | Data quality metrics will enable data owners and data consumers to determine if data is fit-for-purpose. That information needs to be visible to both owners and data consumers. |
| Legacy / On-Premises Challenges | The limited application of data quality management in many legacy environments results in a lack of transparency on the quality of data and an inability for data consumers to determine if it is fit-for-purpose. Data owners may not be aware of data quality issues. |
| Automation Opportunities | |
| Benefits | Data consumers can determine if data is fit-for-purpose. Data owners are aware of data quality issues and can drive their prioritization and remediation. |
| Summary | Providing clarity on data quality and support to ensure data is fit-for-purpose will help data owners address data quality issues. |