6.0 Data & Technical Architecture

Upper Matter

Introduction

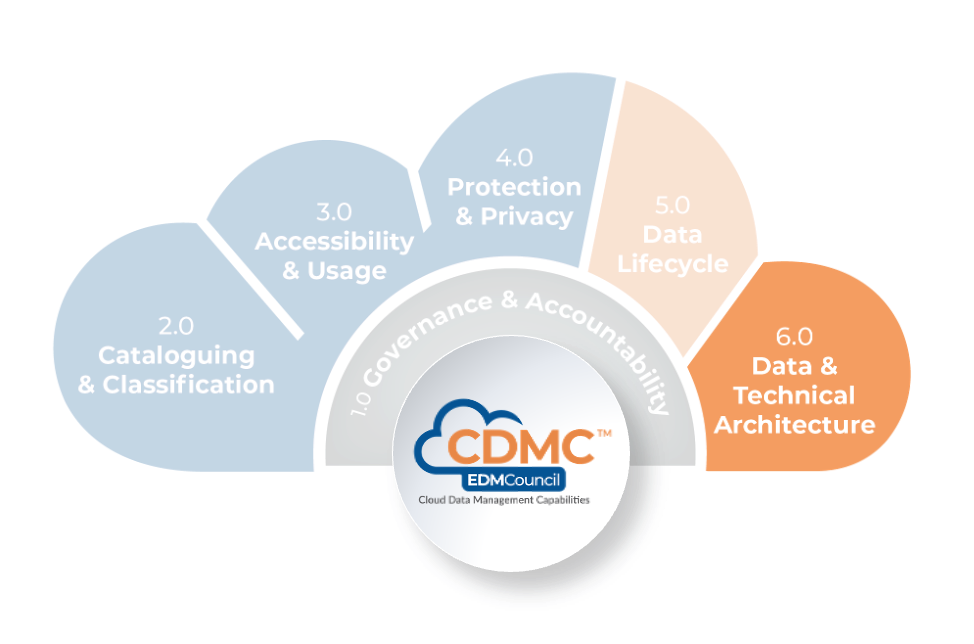

Data and technical architecture addresses architectural issues that are unique to cloud computing and that affect how data is managed in a cloud environment. Most cloud service providers offer many options for designing and implementing business solutions, both in the choice of cloud services and in how those services are configured and consumed. Developing and adopting specific architecture patterns and guidelines can provide a foundation for best practice data management in cloud environments.

Definition

The Data & Technical Architecture component is a set of capabilities for ensuring that data movement into, out of and within cloud environments is understood and that architectural guidance is provided on key aspects of the design of cloud computing solutions.

Scope

- Establish and apply principles for data availability and resilience.

- Support business requirements for backup and point-in-time recovery of data.

- Facilitate optimization of the usage and associated costs of cloud services.

- Support data portability and the ability to migrate data between cloud service providers.

- Automate the identification of data processes and flows within and between cloud environments, capturing metadata to describe data movement as it traverses the data lifecycle.

- Identify, track and manage changes to data lineage, establishing the ability to explain lineage at any point-in-time.

- Provide tools that meaningfully report and visualize lineage—from both a business perspective and a technical perspective.

Overview

Cloud computing introduces capabilities that an organization should include in its data management and architecture best practices. These capabilities allow an organization to adopt leading-edge approaches to data management, such as Data-as-a-Service (DaaS) or data fabrics. However, many organizations may find it best to begin more simply and seek or develop guidance on aspects of data storage such as speed (storage tier), type (including data stores, object stores and file stores) and geographic location. In any solution design, it will be necessary to balance cost against functionality. Consumption-based pricing, based on the volume of data stored and the volume of data ingested or egressed, provides more flexibility than is typically the case in on-premises environments.
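The balance between cost and functionality under consumption-based pricing can be made concrete with a small estimate. The sketch below is a minimal illustration, assuming a simplified rate card with per-GB charges for storage, ingress, egress and retrieval; the rates and the `RateCard` and `monthly_cost` names are hypothetical and do not reflect any provider's actual pricing.

```python
from dataclasses import dataclass

@dataclass
class MonthlyUsage:
    stored_gb: float    # average volume of data held during the month
    ingested_gb: float  # volume of data moved into the environment
    egressed_gb: float  # volume of data moved out of the environment

@dataclass
class RateCard:
    # Hypothetical per-GB prices; real prices vary by provider, region and tier.
    storage_per_gb_month: float
    ingress_per_gb: float
    egress_per_gb: float
    retrieval_per_gb: float = 0.0  # some colder tiers charge to read data back

def monthly_cost(u: MonthlyUsage, r: RateCard, retrieved_gb: float = 0.0) -> float:
    """Estimate one month's data cost under consumption-based pricing."""
    return (u.stored_gb * r.storage_per_gb_month
            + u.ingested_gb * r.ingress_per_gb
            + u.egressed_gb * r.egress_per_gb
            + retrieved_gb * r.retrieval_per_gb)

hot = RateCard(storage_per_gb_month=0.02, ingress_per_gb=0.0, egress_per_gb=0.08)
cool = RateCard(storage_per_gb_month=0.01, ingress_per_gb=0.0, egress_per_gb=0.08,
                retrieval_per_gb=0.01)
usage = MonthlyUsage(stored_gb=10_000, ingested_gb=500, egressed_gb=1_000)

print(round(monthly_cost(usage, hot), 2))                       # 280.0
print(round(monthly_cost(usage, cool, retrieved_gb=2_000), 2))  # 200.0
```

With these hypothetical rates the cooler tier is cheaper at the assumed read-back volume (200 versus 280 per month), but if 20,000 GB were retrieved each month its cost would rise to 380, above the hot tier. Storage-tier guidance needs to capture this kind of trade-off.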

Cloud service providers expose APIs for managing data services, and these APIs can typically be used both manually and programmatically. Many cloud service providers offer an array of APIs for computation, storage management, data management, scaling, monitoring and reporting, often well beyond what is typically available in on-premises environments.

There are different types of data recovery options available within most cloud environments. The option chosen will determine the speed at which any recovery can be accomplished. Availability zones, block-based storage replication and other options can enable the organization to exploit various techniques to facilitate recovery based on the criticality of specific data access and application requirements. Dynamic scalability is a key feature of cloud computing and can be used in various ways to enhance data resiliency and availability by separating computational and storage functionality. Multi-cloud environments extend that scalability further. An organization will need to develop guidelines that aim to improve cost efficiency and address the cost of data movement between cloud environments while supporting business data needs for resiliency and availability.

Compared with on-premises environments, tools and services within cloud environments typically enable better discovery of data lineage and provide more detailed, accurate and up-to-date lineage tracking. Consequently, a much greater degree of data lineage detail is available within a cloud environment, which enables:

- Validation of data sources.

- Analysis of the impact of change and improved root-cause analysis of data quality issues.

- Detection of duplicate or conflicting data transformations and derivations.

- Detection and assessment of data replication and redundancy.
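The lineage uses listed above can be illustrated with a small lineage graph. This is a minimal sketch, assuming lineage has already been captured as (source, target, transformation) edges; all dataset and transformation names are hypothetical. Downstream traversal supports impact analysis, upstream traversal to root datasets supports source validation and root-cause analysis, and grouping edges by target exposes conflicting derivations.

```python
from collections import defaultdict, deque

# Hypothetical captured lineage: (source dataset, target dataset, transformation id).
EDGES = [
    ("crm.customers", "staging.customers", "t_copy_customers"),
    ("erp.orders", "staging.orders", "t_copy_orders"),
    ("staging.customers", "mart.customer_revenue", "t_join_revenue"),
    ("staging.orders", "mart.customer_revenue", "t_join_revenue"),
    ("erp.orders", "mart.customer_revenue", "t_legacy_revenue"),  # second derivation
    ("mart.customer_revenue", "report.quarterly_revenue", "t_aggregate_q"),
]

downstream, upstream = defaultdict(set), defaultdict(set)
for src, dst, _ in EDGES:
    downstream[src].add(dst)
    upstream[dst].add(src)

def reachable(start, graph):
    """Breadth-first traversal: every dataset reachable from `start`."""
    seen, queue = set(), deque([start])
    while queue:
        for nxt in graph[queue.popleft()]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

def impact_of_change(dataset):
    """Datasets affected downstream if `dataset` changes."""
    return reachable(dataset, downstream)

def original_sources(dataset):
    """Root datasets (no recorded inputs) that ultimately feed `dataset`."""
    return {n for n in reachable(dataset, upstream) if not upstream[n]}

def conflicting_derivations():
    """Targets produced by more than one distinct transformation."""
    producers = defaultdict(set)
    for _, dst, transform in EDGES:
        producers[dst].add(transform)
    return {dst: t for dst, t in producers.items() if len(t) > 1}

print(sorted(impact_of_change("staging.orders")))
print(sorted(original_sources("report.quarterly_revenue")))
print(conflicting_derivations())
```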

Migration to cloud computing is an opportunity for an organization to rationalize its data ecosystem and simplify its data lineage. Data movements within and between environments will expand. Cloud computing greatly enhances the ability of an organization to detect and record these movements automatically. The immediate scalability of processing power in a cloud environment enables a level of detail to be captured in data lineage that is rarely feasible within on-premises environments. Automated monitoring reduces the effort necessary to maintain this lineage data.

The capture of data lineage is critical to controlling data in a cloud environment. Understanding the actual source of data and the movement of data from source to consumption provides confidence in the data that is put to use in business processes and analytics. It underpins regulatory compliance, impact analysis, quality troubleshooting and the detection of duplicate data. Cloud technology offers organizations significant potential to automate many aspects of data lineage discovery and management.

While data lineage tracking is more readily performed in the cloud, it is important to note that when data flows are moved from an on-premises environment without re-engineering, the lineage may not be discoverable by monitoring services in the cloud environment. In such cases, the relevant lineage metadata must be loaded into the cloud data store along with the associated data.
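Explaining lineage at any point in time (one of the Scope items above) depends on recording when each lineage edge became effective, rather than overwriting it when a flow changes. A minimal sketch, assuming each edge carries hypothetical `valid_from` and `valid_to` dates; the on-premises edge stands in for lineage metadata loaded alongside migrated data.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass(frozen=True)
class LineageEdge:
    source: str
    target: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the edge is still current

def lineage_as_of(edges, target, as_of):
    """Direct sources of `target` that were in effect on `as_of`."""
    return {
        e.source for e in edges
        if e.target == target
        and e.valid_from <= as_of
        and (e.valid_to is None or as_of < e.valid_to)
    }

# Hypothetical history: the ledger feed was re-pointed from an on-premises
# extract to a cloud-native pipeline on 2023-07-01.
history = [
    LineageEdge("onprem.gl_extract", "cloud.ledger", date(2022, 1, 1), date(2023, 7, 1)),
    LineageEdge("cloud.gl_pipeline", "cloud.ledger", date(2023, 7, 1)),
]

print(lineage_as_of(history, "cloud.ledger", date(2023, 3, 31)))  # {'onprem.gl_extract'}
print(lineage_as_of(history, "cloud.ledger", date(2024, 1, 1)))   # {'cloud.gl_pipeline'}
```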

Value Proposition

Organizations that establish best practice architecture patterns and guidelines for the adoption of cloud capabilities can confidently maximize the value they realize from those capabilities:

- Data management best practices can be engineered into cloud solutions.

- The compute and storage scale of the cloud offers great global availability and resiliency for increased data accessibility and recovery. Scalability also offers greater cost efficiencies.

- Multiple cloud environments reduce the perceived business risk of data access.

- Choices of technical options such as storage tiering, availability zones and replication can match business needs.

- Cost visibility and control can be built into the solution design.

Adopting best practices and standards for data portability provides a basis for exiting or changing cloud services in response to commercial or regulatory drivers.

Organizations that take advantage of the enhanced capabilities for managing data lineage within cloud environments can reduce the costs associated with data lineage and create opportunities to realize business value from well-understood data lineage:

- Costly and error-prone manual activities can be eliminated.

- Detailed point-in-time information can be produced with ease to satisfy regulatory audits.

- The analytics environment can be simplified by automated monitoring of the data ecosystem. The automation minimizes both the need for analysts to manually research the data sources and the risk that unwanted changes to the provenance of their data will go undetected.

Core Questions

- Has architectural guidance for the design of backup approaches been provided?

- Have architecture guidance and patterns been provided for data processing, use, storage and movement?

- Are there architectural standards that define how solutions should support the transfer of data and processing to another provider?

- Have architecture patterns been selected and implemented to support business requirements for availability and resilience?

- Have policies and standards been established for backup strategies, planning, implementation and testing?

- Is lineage automatically discovered and recorded across all in-scope environments?

- Have policies and procedures for lineage change management been defined?

- Can lineage be reported for any historic point-in-time?

- Have lineage reporting and visualization requirements been documented and approved?

Core Artifacts

- Architecture Patterns – addressing backups, data processing, use, storage and movement, availability and resilience, and data portability

- Data Management Policy, Standard and Procedures, including:

  - Defining and operationalizing data backup

  - Defining and operationalizing data lineage change management

- Cloud Provider Exit Plan

- Data Lineage Reports

- Data Lineage Reporting and Visualization Requirements

6.1 Technical Design Principles are Established and Applied

Technical design principles must be established to facilitate the optimization of cloud use and cost efficiency. They must guide the implementation of solutions that meet availability, resilience, back-ups, and point-in-time recovery requirements. The ability to exit cloud services must be planned and tested, facilitated by data portability between cloud and on-premises environments.

6.1.1 Optimization of cloud use and cost efficiency is facilitated

Description

The placement, storage and use of data in a cloud environment offer an organization greater flexibility and capability than is typically available in an on-premises environment. However, all activities associated with a cloud environment, including data processing, use, storage and movement, incur costs. In addition, many architecture, design and implementation decisions must be made, and these must follow best practices. At the same time, existing design principles and guidelines must be migrated or revised, and new ones established, to account for the necessary trade-offs between functionality, use and cost efficiency.

Objectives

- Establish architecture guidelines and patterns for data processing, use, storage and movement—emphasizing automation to drive standardization.

- Provide guidelines on solution design that optimize functionality and cost while sufficiently addressing constraints such as security, integrity and availability.

- Identify and gain approval for the cost drivers that must be addressed in cloud-based solution business requirements, including data retention, availability, and sovereignty.

- Define and capture usage and cost transparency metrics that adequately support management decision-making and ongoing oversight.

Advice for Data Practitioners

Architecture guidance and patterns should be used to capture and formalize best practices for designing cloud solutions. There are many considerations. It is important to begin by matching requirements driven by the sensitivity of the data with the cloud provider's features, balancing functionality and cost. Practitioners may need to lead an effort to make decisions on single-cloud, multi-cloud and hybrid-cloud designs and on the location of data stores and processing.

Architectural guidance should encourage the decoupling of compute and storage so that each can be scaled independently, supporting a solution that is both cost-effective and high-performing. It may be necessary to create duplicate operational data stores to meet availability requirements. Wherever practicable, automate and standardize data movements into, out of and within cloud environments.

In addition, data lifecycle management should be driven by organizational policy. Use compressed formats to reduce data storage and transfer costs, and avoid unintentional or unnecessary processing and data movement.
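To illustrate why compressed formats reduce storage and transfer costs, the sketch below gzip-compresses a synthetic CSV extract using only the Python standard library. The data is invented and highly repetitive, so the ratio it prints is illustrative only; real ratios depend on the data and the format chosen. Because storage and egress are usually charged by volume, the same ratio applies directly to those cost lines.

```python
import csv
import gzip
import io

def to_csv_bytes(rows):
    """Serialize rows to UTF-8 CSV bytes."""
    buf = io.StringIO()
    csv.writer(buf).writerows(rows)
    return buf.getvalue().encode("utf-8")

# Synthetic, repetitive extract (invented data for illustration).
rows = [["trade_id", "desk", "currency", "status"]]
rows += [[str(i), "RATES", "USD", "SETTLED"] for i in range(10_000)]

raw = to_csv_bytes(rows)
compressed = gzip.compress(raw)
ratio = len(compressed) / len(raw)
print(f"raw={len(raw):,} bytes  gzip={len(compressed):,} bytes  ratio={ratio:.2f}")

# Round-trip check: compression is lossless.
assert gzip.decompress(compressed) == raw
```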

Practitioners should verify that cloud service and technology providers can support the architecture patterns and can disclose the major cost contributors and rate information relevant to any guidance provided. While the selection and management of service providers are beyond the scope of this sub-capability, the ability of providers to optimize usage, efficiency and costs should be factored into the selection of new providers.

Practitioners should also consider how cost optimization will be assessed and managed. Information on the actual costs incurred should be used to justify the continued use of existing systems. Implemented designs must continue to be cost-effective and demonstrate ongoing potential for optimization. The measurements that inform this process depend on well-defined metrics, effective cost management and an effective internal chargeback process that allocates specific costs to the business solutions that incur them.
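A minimal chargeback sketch, assuming billing line items are tagged with a hypothetical `solution` tag: tagged costs are charged directly to the owning business solution, and untagged shared costs are spread pro rata to direct spend. Pro-rata allocation is one common choice, not one prescribed here; organizations may allocate shared costs by measured usage or an agreed split instead.

```python
from collections import defaultdict

# Hypothetical billing line items: (resource id, cost in USD, tags).
line_items = [
    ("bucket-a", 1200.0, {"solution": "risk-analytics"}),
    ("cluster-1", 3000.0, {"solution": "risk-analytics"}),
    ("bucket-b", 800.0, {"solution": "client-reporting"}),
    ("shared-network", 1000.0, {}),  # untagged, shared cost
]

def chargeback(items):
    """Allocate tagged costs directly; spread untagged costs pro rata to direct spend."""
    direct = defaultdict(float)
    shared = 0.0
    for _, cost, tags in items:
        owner = tags.get("solution")
        if owner:
            direct[owner] += cost
        else:
            shared += cost
    total_direct = sum(direct.values())
    return {owner: cost + shared * cost / total_direct for owner, cost in direct.items()}

print(chargeback(line_items))  # {'risk-analytics': 5040.0, 'client-reporting': 960.0}
```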

Advice for Cloud Service and Technology Providers

Cloud service and technology providers should automate data collection and report on cost drivers, including data replication, storage tier, retention period and destruction deadlines. Providers should also offer comprehensive reporting on resource utilization, billing costs for storage, usage, access, and data movements. The organization should be able to act on alerts that trigger on pre-defined thresholds. Examples include:

- alerting on a request to move data from a lower to a higher cost storage tier.

- alerting when an attempt is made to delete data whose age does not satisfy its retention rules (for example, before its retention period has expired).

Such abilities enable an organization to proactively monitor and manage its cloud environment(s) from risk management, cost and business function perspectives.
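The two example alerts can be expressed as simple rules. This sketch assumes a hypothetical ranking of storage tiers by cost, and treats a deletion as having an incorrect data age when it is requested before the data's retention period has elapsed. In practice such rules would be evaluated against the provider's event or audit stream rather than against in-memory objects.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical tiers ranked from cheapest to most expensive.
TIER_COST_RANK = {"archive": 0, "cold": 1, "cool": 2, "hot": 3}

@dataclass
class TierChangeRequest:
    object_id: str
    from_tier: str
    to_tier: str

@dataclass
class DeleteRequest:
    object_id: str
    created: date
    retention_days: int
    requested_on: date

def tier_change_alert(req):
    """Alert when data is moved from a lower-cost to a higher-cost tier."""
    if TIER_COST_RANK[req.to_tier] > TIER_COST_RANK[req.from_tier]:
        return f"ALERT: {req.object_id} moving from {req.from_tier} to {req.to_tier} (higher-cost tier)"
    return None

def delete_alert(req):
    """Alert when deletion is requested before the retention period has elapsed."""
    age_days = (req.requested_on - req.created).days
    if age_days < req.retention_days:
        return f"ALERT: {req.object_id} is {age_days} days old; retention requires {req.retention_days}"
    return None

print(tier_change_alert(TierChangeRequest("sales-2023.parquet", "cold", "hot")))
print(delete_alert(DeleteRequest("ledger-2024-q1.csv", date(2024, 1, 1), 365, date(2024, 6, 1))))
```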

In addition, providers should give the organization ready access to logging services that provide full visibility into all data activity and movements. Ideally, log access APIs should be available with different levels of user access and should support uploading, extracting and accessing log data. These abilities give the organization the benefits of detailed monitoring and analysis, and insight into how to minimize costs.

Questions

- Have architecture guidelines and patterns been established for data processing, use, storage and movement?

- Do architecture guidelines emphasize automation to drive the adoption of standard patterns?

- Are cost drivers identified and approved that must be addressed in business requirements for cloud-based solutions?

- Have guidelines been established for solution design that optimize functionality and cost and sufficiently address security, integrity and availability constraints?

- Are usage and cost transparency metrics defined and captured that adequately support management decision-making and ongoing oversight?

Artifacts

- Cloud Architecture Requirements and Guidelines – including advice on automation and adoption of standard patterns

- Cloud Architecture Patterns – including approved designs for data processing, use, storage and movement

- Business Requirements Template – including requirements that affect costs

- Solution Design Guidelines – including guidance on optimizing functionality, cost and constraints such as security, integrity and availability

- Cloud Use and Cost Reports – including metrics defined and captured to support management decision-making and ongoing oversight

Scoring

Not Initiated

Optimization of cloud use and cost efficiency is not facilitated

Conceptual

The need for optimization of cloud use and cost efficiency to be facilitated has been identified, and the facilitation is being discussed

Developmental

Facilitation of cloud use and cost efficiency optimization is being developed

Defined

Facilitation of optimization of cloud use and cost efficiency is defined and validated by stakeholders.

Achieved

Facilitation of optimization of cloud use and cost efficiency is established and adopted by the organization.

Enhanced

Facilitation of optimization of cloud use and cost efficiency is established as part of business-as-usual practice with continuous improvement.

6.1.2 Principles for data availability and resilience are established and applied

Description

The business requirements for data availability and resiliency in a cloud environment must be documented and approved. These requirements are applied to the cloud architecture design to employ cloud capabilities that support availability, accessibility, replication, and resiliency across the entire architecture.

Objectives

- Define and gain approval of business requirements for data availability and resilience.

- Provide availability and resiliency guidelines for selecting storage and access options available from the cloud service provider.

- Provide guidelines on employing and configuring availability zones to meet requirements for resilience and high availability.

- Ensure each data resource is tagged in the data catalog with its corresponding availability and resilience service level agreement (SLA) and service level objective (SLO).

- Develop architecture patterns for providing data consistency, availability, and partition tolerance.

- Adopt appropriate architecture patterns in line with business requirements for data availability and resilience.
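A data catalog check along these lines can show which data resources still lack availability and resilience tags. A minimal sketch, assuming catalog entries are exported as dictionaries and using hypothetical tag names `availability_sla` and `availability_slo`; real catalogs have their own tagging schemes.

```python
# Hypothetical catalog export; tag names are illustrative, not a standard.
catalog = [
    {"name": "payments.transactions",
     "tags": {"availability_sla": "99.95%", "availability_slo": "99.9%"}},
    {"name": "marketing.campaigns", "tags": {"availability_sla": "99.5%"}},
    {"name": "hr.payroll", "tags": {}},
]

REQUIRED_TAGS = {"availability_sla", "availability_slo"}

def missing_sla_slo_tags(entries):
    """Map each catalog entry to the required SLA/SLO tags it lacks."""
    gaps = {}
    for entry in entries:
        missing = REQUIRED_TAGS.difference(entry["tags"])  # iterating a dict yields its keys
        if missing:
            gaps[entry["name"]] = sorted(missing)
    return gaps

print(missing_sla_slo_tags(catalog))
```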

Advice for Data Practitioners

Typically, an organization relies on data as the cornerstone of its business. Solid cloud architecture and design can significantly improve the outlook for achieving the best possible data availability and resiliency.

A key principle in establishing data availability and data resiliency for data management in a cloud environment is to establish controls that ensure data is available only to authorized users, in a controlled manner that adheres to data protection and privacy requirements, to satisfy business requirements. Another key principle in achieving high availability and resiliency is to employ cloud storage capabilities such as storage options, availability zones and optimization for regional considerations. A further key principle is to select and configure a cloud storage architecture that meets the availability and resiliency requirements of all relevant user types.

Fundamentally, the cloud environment architecture pattern must balance data duplication for availability against the cost and consistency implications of that duplication. The architectural pattern should address features such as repeatable (idempotent) data loading and the ability to restart processing from the last successful point. Architects should be aware of the trade-offs and choices implied by the CAP Theorem, also known as Brewer’s Theorem, which states that a distributed data store cannot simultaneously guarantee all three of consistency, availability and partition tolerance; in practice, when a network partition occurs, the system must trade consistency against availability.
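A minimal sketch of repeatable loading with restart from the last successful point, assuming records carry a unique `id` and a local JSON file serves as the checkpoint (both assumptions for illustration). Loading is an upsert keyed on `id`, so re-applying a partially loaded batch does not create duplicates, and the checkpoint advances only after a whole batch has been applied. In a production pipeline, the checkpoint and the data write would need to be committed together, which a local file cannot guarantee.

```python
import json
import os
import tempfile

def load_batches(batches, target, checkpoint_path):
    """Load batches in order, resuming after the last committed batch.

    `target` maps record id -> record, so re-applying a batch overwrites rather
    than duplicates rows (an idempotent upsert).
    """
    last_done = -1
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            last_done = json.load(f)["last_batch"]
    for i, batch in enumerate(batches):
        if i <= last_done:
            continue                       # committed in an earlier run
        for record in batch:
            target[record["id"]] = record  # upsert keyed on record id
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump({"last_batch": i}, f)
        os.replace(tmp_path, checkpoint_path)  # atomic rename on POSIX filesystems
    return target

def failing(records, fail_at):
    """Yield records, then raise part-way through to simulate an outage."""
    for n, record in enumerate(records):
        if n == fail_at:
            raise RuntimeError("simulated failure mid-batch")
        yield record

batches = [[{"id": 1}, {"id": 2}], [{"id": 3}, {"id": 4}]]
checkpoint = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
target = {}
try:
    load_batches([batches[0], failing(batches[1], fail_at=1)], target, checkpoint)
except RuntimeError:
    pass  # record 3 was written, but batch 1 was never checkpointed
load_batches(batches, target, checkpoint)  # restart: batch 0 skipped, batch 1 re-applied
print(sorted(target))  # [1, 2, 3, 4]
```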

It will be necessary to analyze and re-architect business applications developed for on-premises environments to fully utilize the availability and resilience capabilities of cloud services. Architecture blueprints and patterns for cloud-native applications will guide this re-architecture.

All stakeholders responsible for implementation in the cloud environment must establish a system of controls to monitor the SLAs and SLOs for the environment. Contractual agreements with cloud service providers should include SLAs for data availability. An SLO is a component of an SLA and enables the service provider’s reliability to be measured against the thresholds guaranteed in the SLA. For availability and resilience, the SLO provides a quantitative basis for defining the level of service the organization can expect, through metrics such as uptime and network throughput.
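The quantitative side of an availability SLO can be shown with a short calculation of the downtime it permits. This sketch assumes a 30-day measurement window, a common but not universal convention; the function names are illustrative.

```python
def allowed_downtime_minutes(slo_percent: float, window_days: int = 30) -> float:
    """Downtime permitted by an availability SLO over a measurement window."""
    window_minutes = window_days * 24 * 60
    return window_minutes * (1 - slo_percent / 100)

def slo_met(observed_downtime_min: float, slo_percent: float, window_days: int = 30) -> bool:
    """True if observed downtime stays within what the SLO allows."""
    return observed_downtime_min <= allowed_downtime_minutes(slo_percent, window_days)

print(round(allowed_downtime_minutes(99.9), 1))   # 43.2
print(round(allowed_downtime_minutes(99.99), 2))  # 4.32
print(slo_met(40, 99.9))                          # True
```

For example, a 99.9% SLO over 30 days permits 43.2 minutes of downtime (43,200 minutes × 0.001), while 99.99% permits about 4.3 minutes.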

As stated in the Upper Matter for this component, data practitioners should take advantage of cloud service providers' architecture training and education resources.

Advice for Cloud Service and Technology Providers

Cloud service and technology providers should provide architectural blueprints to data practitioners, giving detailed information on the various storage types and capabilities that support data availability and resiliency. Providers should communicate guidance on how the cost of common or relevant storage and workloads must be considered when selecting an approach that satisfies the business requirements. Refer to CDMC 6.1.1 Optimization of cloud use and cost efficiency is facilitated.

Providers should also describe how availability zones use replication to support high availability in a cloud implementation. APIs should be available to enable the automation of data availability and resiliency. Lastly, providers should offer playbooks for assessing the suitability of each architectural pattern and how it could be implemented.

Questions

- Are there approved business requirements for availability and resilience?

- Have guidelines for the selection of storage and access options been documented?

- Has guidance on the use of availability zones been provided?

- Have patterns for consistency, availability and partition tolerance trade-offs been developed?

- Does each data resource have a corresponding availability and resilience service level agreement (SLA) and service level objective (SLO), and is each document accessible in the data catalog?

- Have architecture patterns been selected and implemented to support business requirements for availability and resilience?

Artifacts

- Business Requirements Document – including requirements for data availability and resilience

- Service Level Agreement – including SLOs for data availability and resilience

- Architecture Standards, Patterns and Guidelines – covering the selection of storage and access options, use of availability zones and consistency, availability and partition tolerance trade-offs

- Data Catalog – including cloud service and technology provider SLA / SLO tags

Scoring

Not Initiated

No formal principles for data availability and resilience exist.

Conceptual

No formal principles for data availability and resilience exist, but the need is recognized, and the development is being discussed.

Developmental

Formal principles for data availability and resilience are being developed.

Defined

Formal principles for data availability and resilience are defined and validated by stakeholders.

Achieved

Formal principles for data availability and resilience are established and adopted by the organization.

Enhanced

Formal principles for data availability and resilience are established as part of business-as-usual practice with continuous improvement.

6.1.3 Backups and point-in-time recovery are supported

Description

The ability to perform data backups is critical for disaster recovery and, more generally, for point-in-time data recovery. Backups are copies of data, typically stored in a location different from the primary data store. In a disaster recovery context, data retrieved from a recent backup is used to restore data and system configuration. In addition, data from an older backup can be used to restore data that was in use at an earlier time.

Objectives

- Gain approval for, and adopt, policy, standards and procedures covering backup and recovery strategy, planning, implementation and testing.

- Ensure backup and recovery capabilities support disaster recovery and point-in-time data recovery.

- Ensure backup and recovery standards specify isolation and data residency requirements.

- Ensure backup and recovery standards specify security requirements that align with the data asset classifications in the data catalog.

- Provide architectural guidance for designing backup and recovery approaches.

- Ensure that the backup and recovery plans reflect the standards and guidance.

Advice for Data Practitioners

Cloud operational backups

Data backups are special copies of the contents of data stores. Backups are stored in a different location from the original data. When necessary, data is taken from a backup copy to restore data to a correct state. Backups must be secure and provide reliable recovery mechanisms to ensure that a logical recovery can occur when needed. A solid backup plan ensures that data is readily recoverable with minimal data loss.

Above all, a backup and recovery plan must outline how to recover data quickly and completely. After compiling a recovery plan, it is essential to test it immediately and at regular intervals. When designing a backup plan, it is also important to specify retention periods, backup file capacity requirements and the method for disposing of unnecessary backup files.
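Retention periods translate directly into a disposal rule. A minimal sketch, assuming backups are identified by their timestamps and that the newest backup is always retained regardless of age (a safeguard added here for illustration, not a stated requirement):

```python
from datetime import datetime, timedelta

def backups_to_dispose(backup_times, retention_days, now, keep_min=1):
    """Backups older than the retention period, always keeping the newest `keep_min`."""
    newest_first = sorted(backup_times, reverse=True)
    protected = set(newest_first[:keep_min])
    cutoff = now - timedelta(days=retention_days)
    return sorted(t for t in newest_first if t < cutoff and t not in protected)

now = datetime(2024, 3, 1)
daily = [now - timedelta(days=d) for d in range(40)]  # 40 daily backups
expired = backups_to_dispose(daily, retention_days=30, now=now)
print(len(expired))  # 9 (the backups taken 31 to 39 days ago)
```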

Using proprietary formats for data backups may be problematic when attempting to restore data if there is a failure in the proprietary system.

Data archiving

A data archive is a copy of data that is put into long-term storage. The original data may or may not be deleted from the source system after the archive copy is made and stored, though it is common for the archive to be the only copy of the data.

Archiving is different from an operational backup and is typically done to support regulatory and legal requirements. Many archiving solutions use simple, generic methods for storing copies of data. The archive copies are independent of the archiving system and the primary data storage system.

An archive may have multiple purposes. By maintaining data archives, an organization can maintain an extensive permanent record of historical data. Commonly, a data archive directly supports information retention requirements for an organization. If a dispute or inquiry arises about a business practice, contract, financial transaction, or employee, the records about that subject can be obtained from the archive. Refer to CDMC 5.1 The Data Lifecycle is Planned and Managed.

Backup scope

A basic assumption of an effective backup and recovery plan is that all data in the operational environment may become inaccessible. The data itself is typically only one aspect of a system that requires recovery. The plan must account for infrastructure configuration, environment variables, utility scripts, source code and other relevant subsystems that integrate with the main application.

Also, it may be necessary to consider:

- Machine learning models and other time-variant models are not easily reconstructed from source code, data or model outputs alone. Therefore, it is essential to make provisions to protect the training data sets.

- If encryption keys protect any data, the loss of these keys will render the data unrecoverable. All data encryption keys should be part of a backup and recovery plan.
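One way to check that a backup covers the full scope described above, and that backed-up files remain intact, is a manifest with per-file checksums. This sketch uses SHA-256 from the standard library; the scope category names are hypothetical labels derived from the preceding paragraphs, not a standard taxonomy.

```python
import hashlib
import tempfile
from pathlib import Path

# Illustrative scope categories taken from the backup-scope guidance; not a standard list.
REQUIRED_SCOPE = {"data", "infrastructure_config", "environment_variables",
                  "utility_scripts", "source_code", "training_data", "encryption_keys"}

def sha256_of(path):
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()

def build_manifest(items):
    """items: iterable of (scope category, file path); records a SHA-256 for each file."""
    return [{"category": c, "path": str(p), "sha256": sha256_of(p)} for c, p in items]

def scope_gaps(manifest):
    """Required scope categories that the backup does not yet cover."""
    return REQUIRED_SCOPE - {m["category"] for m in manifest}

def changed_files(manifest):
    """Files whose current contents no longer match the recorded checksum."""
    return [m["path"] for m in manifest if sha256_of(m["path"]) != m["sha256"]]

tmp = Path(tempfile.mkdtemp())
(tmp / "orders.csv").write_text("id,amount\n1,10\n")
(tmp / "app.py").write_text("print('hello')\n")
manifest = build_manifest([("data", tmp / "orders.csv"), ("source_code", tmp / "app.py")])
print(sorted(scope_gaps(manifest)))  # five categories still uncovered
(tmp / "orders.csv").write_text("id,amount\n1,99\n")  # simulate corruption of a backed-up file
print(changed_files(manifest))       # only orders.csv is flagged
```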

Backup resiliency

A backup and recovery process must be resilient against a multitude of possible issues to be effective. Regulators routinely require minimum data backup protections. The air gap is a common implementation for satisfying regulations for protecting data backups.

The OCC asks GSIPs to "Logically segment critical network components and services (e.g., core processing, transaction data, account data, and backups) and, where appropriate, physically air gap critical or highly sensitive elements of the network environment." It goes on to highlight backups: "securely store system and data backups offsite at separate geographic locations and maintain offline or in a manner that provides for physical or logical segregation from production systems."1

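The Federal Financial Institutions Examination Council (FFIEC), an interagency body whose members include the Federal Reserve Board (FRB), FDIC, NCUA, OCC and CFPB, defines an air gap as follows: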

"An air gap is a security measure that isolates a secure network from unsecured networks physically, electrically, and electromagnetically."

"In accordance with regulatory requirements and FFIEC guidance, financial institutions should consider taking the following steps. Protections such as logical network segmentation, hard backups, air gapping, maintaining an inventory of authorized devices and software, physical segmentation of critical systems, and other controls may mitigate the impact of a cyber attack involving destructive malware…."1

Backup resiliency in the cloud

Historically, many on-premises backup procedures involved taking a data backup, storing it in another onsite location and copying the backup to a storage medium that would be stored offsite. This procedure, with its movement of data to segregated storage, met the air gap's physical, network and electromagnetic isolation requirements. The intent of the air gap requirement is to isolate backups so that there is no single point of failure between the primary data store and backup storage.

When employing cloud computing solutions to support a backup and recovery plan, data practitioners should be aware of the technology options available from the cloud service provider (CSP) to ensure that physical, network, electrical and electromagnetic isolation requirements are met.

It is the responsibility of the data practitioner to understand, configure and verify that CSP solutions meet the requirements in the backup and recovery plan. The data practitioner should provide testing evidence that the backup and recovery plan is readily executable through chosen CSP technologies.

The data practitioner must carefully examine the isolation capabilities of the CSP, and the CSP must provide evidence that its technologies provide backup isolation that meets organization requirements.

Many additional techniques are available to the data practitioner to help satisfy air gap requirements:

- Network isolation – Using a separate Virtual Private Cloud (VPC) to isolate operational and public-facing components from backup environments.

- Logical separation – Implementing security and permission schemes within the application environment to support the division of duties and prevent operations from adversely impacting the backup and configuration areas.

- Physical redundancy – Backup replication can be done within the local region or in other availability zones to mitigate localization risks. When practicable, it is best to protect backups from electronic, electromagnetic and physical risks. These protections should take account of any residency, sovereignty or localization requirements.

- Immutable storage – Write-Once-Read-Many (WORM) storage devices are useful in mitigating the risks of corruption, deletion, unauthorized modification or unintended alteration of data. WORM storage and similar storage offerings can address part of the air gap requirement because such technologies are highly resistant to overwrites and deletions.

- Security and encryption of backups – Because much of the data in the operational environment must be protected, the backup files and environments must also be secure. Although there are performance trade-offs, it is important to consider encrypting all backup files.
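The immutability property that makes WORM storage useful for air gap requirements can be modeled in a few lines. This is an in-memory illustration only, with a hypothetical `WormStore` class; real immutable storage enforces these rules on the provider side (for example, through object-lock or retention-policy features), where client code cannot bypass them.

```python
from datetime import datetime, timedelta, timezone

class WormStore:
    """In-memory model of write-once-read-many storage with a retention lock."""

    def __init__(self):
        self._objects = {}  # key -> (contents, retain_until)

    def put(self, key, data, retain_until):
        if key in self._objects:
            raise PermissionError(f"{key}: WORM objects cannot be overwritten")
        self._objects[key] = (bytes(data), retain_until)

    def get(self, key):
        return self._objects[key][0]

    def delete(self, key, now):
        _, retain_until = self._objects[key]
        if now < retain_until:
            raise PermissionError(f"{key}: locked until {retain_until.isoformat()}")
        del self._objects[key]

now = datetime(2024, 1, 1, tzinfo=timezone.utc)
store = WormStore()
store.put("backup-2024-01-01.tar", b"...backup bytes...", retain_until=now + timedelta(days=30))
for attempt in (lambda: store.put("backup-2024-01-01.tar", b"tampered", now),
                lambda: store.delete("backup-2024-01-01.tar", now)):
    try:
        attempt()
    except PermissionError as err:
        print("blocked:", err)
store.delete("backup-2024-01-01.tar", now + timedelta(days=31))  # allowed once retention expires
```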

Backup gold copy

One approach to ensuring gold-copy status for backups is to configure backup and recovery to write backup files to immutable cloud storage in a secure, segregated network, with replication across physical zones. The segregated network ensures isolation, immutable storage prevents file corruption, and the redundancy provided by the CSP protects the backup from electromagnetic or physical risks. Backup files can also be encrypted and stored with exclusive access rights.

Cloud storage is typically highly redundant. Many providers offer three or more availability zones, 99.99% availability and extreme durability (> 99.999% recoverability). Using a CSP for backup storage is a strong mitigator of the risks of site failure and localization. Establishing network isolation and implementing highly restrictive access controls help prevent accidental corruption and the harmful effects of malicious software or bad actors.
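The benefit of replicating across availability zones can be estimated with a simple probability calculation. This sketch assumes zone outages are independent, which overstates the benefit when failures are correlated (for example, a region-wide event or a shared software fault), so the result should be read as an upper bound rather than a guarantee. The 99.9% per-zone figure is hypothetical.

```python
def combined_availability(zone_availability: float, zones: int) -> float:
    """Probability that at least one zone's copy is reachable, assuming
    zone outages are independent (a simplifying assumption)."""
    return 1 - (1 - zone_availability) ** zones

for n in (1, 2, 3):
    print(n, f"{combined_availability(0.999, n):.9f}")
```

Under the independence assumption, three zones at 99.9% each give a combined availability of 1 - 0.001^3, or 99.9999999%.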

Planning for point-in-time recovery

Point-in-time recovery gives administrators or users the ability to restore data from a backup so that the contents of the operational data are identical to those at a specific point in the past. Typical triggers include an accidentally dropped database table, an unintentionally committed update, or a process that maliciously corrupts data in files or systems.

Point-in-time recovery can employ cloud storage replication processes that simplify backup-and-restore processes. Point-in-time recovery can also exploit cloud capabilities such as availability zones or multi-tier storage.
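Many database systems implement point-in-time recovery by restoring a full backup and replaying a log of subsequent changes up to the target time. A minimal sketch of that approach, assuming a key-value snapshot and a hypothetical `Change` record in which `None` marks a deletion; the example recovers to one minute before an accidental deletion.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

@dataclass
class Change:
    at: datetime
    key: str
    value: Optional[int]  # None records a deletion

def restore_to(snapshot, snapshot_time, change_log, target_time):
    """Rebuild state as of `target_time` from a full backup plus a change log."""
    if target_time < snapshot_time:
        raise ValueError("target precedes this backup; start from an older one")
    state = dict(snapshot)  # never modify the backup itself
    for change in sorted(change_log, key=lambda c: c.at):
        if snapshot_time < change.at <= target_time:  # replay only the window
            if change.value is None:
                state.pop(change.key, None)
            else:
                state[change.key] = change.value
    return state

midnight = datetime(2024, 5, 1, 0, 0)
snapshot = {"acct-1": 100, "acct-2": 50}
log = [Change(datetime(2024, 5, 1, 9, 0), "acct-1", 120),
       Change(datetime(2024, 5, 1, 10, 30), "acct-2", None)]  # accidental delete
print(restore_to(snapshot, midnight, log, datetime(2024, 5, 1, 10, 29)))
# {'acct-1': 120, 'acct-2': 50}
```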

These are common use cases for point-in-time recovery:

- Transaction failure– If a transaction, system write or save fails before completion, the system may not be successful in restoring all data to the correct values, and inconsistent data may result. A point-in-time recovery would be a suitable remedy in such cases.

- Rogue or malicious process– If an unauthorized update, deletion or change results in corrupt data, point-in-time recovery is suitable for restoring a system, subsystem, table, or file to the state before the corruption.

- System failure– Point-in-time recovery is effective for recovering from failures that corrupt data at the system level, such as a faulty software release or software update, including cases in which data has become broadly corrupt.

- Media failure– A hardware failure is very similar to a system failure, and the recovery plan is nearly identical. Such failures are less likely to occur in a highly redundant cloud computing environment.

- Point-in-time for disaster recovery– For many organizations, point-in-time recovery is also used for disaster recovery planning. A common approach is to activate a standby site when a system with no high-availability capability needs to be brought online following a failure.

Planning for recovery point and recovery time objectives (RPO & RTO)

It is good practice to design backup and recovery plans that accommodate varying levels of system criticality. A plan for point-in-time recovery should be driven primarily by a recovery point objective (RPO) and a recovery time objective (RTO). In a large organization, practitioners can create patterns or blueprints for systems that share similar RPOs and RTOs.
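One way to turn RPO/RTO blueprints into a repeatable decision is to pick the least stringent blueprint that still meets a system's objectives. In the sketch below, the blueprint names, their RPO/RTO values and the assumption that stricter blueprints cost more are all hypothetical.

```python
from datetime import timedelta

# Hypothetical blueprints, ordered from least to most stringent (and, by
# assumption, least to most expensive): (name, achievable RPO, achievable RTO).
BLUEPRINTS = [
    ("daily-backup", timedelta(hours=24), timedelta(hours=48)),
    ("hourly-snapshot", timedelta(hours=1), timedelta(hours=4)),
    ("continuous-replica", timedelta(minutes=1), timedelta(minutes=15)),
]

def choose_blueprint(required_rpo, required_rto, blueprints=BLUEPRINTS):
    """Return the first blueprint whose RPO and RTO both meet the requirement."""
    for name, rpo, rto in blueprints:
        if rpo <= required_rpo and rto <= required_rto:
            return name
    raise ValueError("no blueprint meets the required RPO and RTO")

print(choose_blueprint(timedelta(hours=2), timedelta(hours=8)))  # hourly-snapshot
```

Ordering the list from cheapest to most stringent means the first match is the least costly option that still satisfies the business requirement.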

A recovery point objective defines the maximum amount of data, usually expressed as a period of time before the disruption, that an organization considers an acceptable loss in a disaster. Replication can provide a recovery point close to real time by replicating all changes to another location, but not every system warrants such a strategy. Business and IT demands should shape the recovery point objective and the associated plan.

A recovery time objective is as important as the recovery point objective because it states the business requirement for a tolerable outage duration: all relevant systems must be operational before the recovery time objective elapses. The time needed to recover a system depends on the recovery method, the frequency of backup checkpoints and the volume of data to recover. Recovery time may lengthen as the volume of stored data grows or as available network capacity decreases.
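A rough worked estimate shows how data volume and network capacity drive recovery time. The sketch assumes periodic backups only (so worst-case data loss equals one backup interval), a sustained restore throughput and decimal units; the function names are hypothetical. For example, restoring 3,600 GB at 250 MB/s takes 3,600,000 MB ÷ 250 MB/s = 14,400 s, or 4 hours, before any fixed overhead.

```python
def estimate_recovery_hours(data_gb, throughput_mb_per_s, overhead_hours=0.0):
    """Transfer time for the restore plus a fixed overhead, in hours.

    Assumes sustained throughput and decimal units (1 GB = 1,000 MB).
    """
    transfer_seconds = data_gb * 1000 / throughput_mb_per_s
    return transfer_seconds / 3600 + overhead_hours

def meets_objectives(backup_interval_hours, data_gb, throughput_mb_per_s,
                     rpo_hours, rto_hours, overhead_hours=0.0):
    """Return (meets_rpo, meets_rto) for a periodic-backup design."""
    # With periodic backups only, up to one full interval of changes can be lost.
    worst_case_loss_hours = backup_interval_hours
    recovery_hours = estimate_recovery_hours(
        data_gb, throughput_mb_per_s, overhead_hours)
    return worst_case_loss_hours <= rpo_hours, recovery_hours <= rto_hours

print(estimate_recovery_hours(3600, 250, overhead_hours=1.0))  # 5.0
print(meets_objectives(6, 3600, 250, rpo_hours=12, rto_hours=4,
                       overhead_hours=1.0))  # (True, False)
```

With one hour of overhead, that restore takes 5 hours and misses a 4-hour RTO, even though 6-hourly backups comfortably meet a 12-hour RPO. Doubling the data volume or halving the throughput doubles the transfer component.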

Advice for Cloud Service and Technology Providers

Cloud service and technology providers (CSPs) should ensure that backup and restore utilities are accessible through a console, command line, and API. Backup and restore services should be available through these methods for each type of storage (block, object, and database).

The CSP should explain each of its available storage tiers, including the media used and the costs. It should also make clear which storage is accessible across one or more availability zones and regions, so that the organization can choose according to its needs. If the CSP offers write-once-read-many (WORM) storage, it should describe the available options and the features of this storage type.

At a minimum, a CSP should provide the ability to encrypt backups and backup files; create and track multiple versions of backups; gain visibility into application stack versions and underlying services, with tools that aid in restoring those versions; and isolate backups from operational systems using virtual networking. Where applicable, the CSP should present the options available for self-managed and managed backup services.

Evidence that these solutions have been implemented should be readily available and presented in reports that include the network, region and other attributes that show isolation characteristics. Ideally, this evidence should be consistent and accessible for each of the backup solutions.

Questions

- Are there policies and standards for backup strategies, planning, implementation and testing?

- Do backup and recovery capabilities support disaster recovery and point-in-time data recovery?

- Do the backup and recovery standards specify isolation requirements?

- Do the backup and recovery standards specify security requirements that align with the data asset classifications in the data catalog?

- Is architectural guidance available for designing backup and recovery approaches?

- Do the backup and recovery plans reflect the standards and guidance?

Artifacts

- Data Management Policy, Standards and Procedures – defining and operationalizing data backup

  - Covering backup strategies, planning, implementation and testing

  - Specifying isolation and data residency requirements

  - Specifying security requirements that align with the data asset classifications in the data catalog

- Backup Architecture and Design Guidance – covering both disaster recovery and point-in-time recovery

- Backup and Recovery Plans – reflecting the standards and guidance

Scoring

Not Initiated

No formal support of backups and point-in-time recovery exists.

Conceptual

No formal support of backups and point-in-time recovery exists, but the need is recognized, and the development is being discussed.

Developmental

Formal support of backups and point-in-time recovery is being developed.

Defined

Formal support of backups and point-in-time recovery is defined and validated by stakeholders.

Achieved

Formal support of backups and point-in-time recovery is established and adopted by the organization.

Enhanced

Formal support of backups and point-in-time recovery is established as part of business-as-usual practice with continuous improvement.

6.1.4 Portability and exit planning are established

Description

There are several drivers for the need to transfer data from a cloud environment. The transfer may be a planned data movement to a provider that offers new functionality. Data transfers are also a necessary part of an exit from an existing cloud service or technology provider. Another reason for a data transfer may be a regulatory expectation or a necessary response to an internal risk assessment. Whatever the reason, data portability planning and testing are required. Consequently, exit planning and data portability are critical capabilities when designing and implementing a sustainable cloud environment.

Objectives

- Document and approve the requirements of data portability and establish a viable exit plan.

- Perform a risk assessment to highlight degrees of data and data process criticality as input to scoping data portability and exit planning.

- Create architectural standards on how solutions provide data transfer and processing from a cloud provider to an alternative provider or on-premises environment.

- Create, test and gain approval of data portability plans.

- Create, test and gain approval of exit plans for each cloud provider.

Advice for Data Practitioners

For many organizations, the footprint of data and functionality deployed to cloud environments continues to grow, and data in the cloud typically becomes more critical to the organization over time. Eventually, such an organization may need to transfer data to other cloud service providers.

Contractual provisions for data portability

Data practitioners should ensure that contractual terms and conditions with cloud service and technology providers include specific rights for the organization to obtain a copy of its data on demand and to have copies of data held by the provider deleted. It is the responsibility of the data practitioner to ensure full removal and deletion of data from the source location once data transfer processes have been completed and verified.

Data architecture considerations

Data practitioners should enforce architectural standards that allow data transfer and processing to be moved to another provider, facilitating data portability and the execution of exit plans. At a minimum, this includes the ability to extract all required data from a provider and, if desired, migrate the data elsewhere. The standards may also include measures that enable database and application processing components to be moved to a new provider rather than rebuilt.

The architectural standards should also ensure that data portability plans are not cost-prohibitive by requiring plans to incorporate detailed assumptions on volumes and associated costs, be regularly reviewed, and be kept up-to-date. The architecture designs should also provide points of interoperability and easily replicable infrastructure with industry-standard APIs.
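Cost assumptions in a portability plan can be made explicit with a small calculation. The tier sizes and per-GB prices below are hypothetical placeholders, not any provider's actual rates; real figures must come from the provider's price list and contract. The sketch applies tiered pricing, in which each successive band of egress volume is billed at its own rate.

```python
# Hypothetical tiered egress prices: (band size in GB, USD per GB).
# Real prices vary by provider, region and contract.
EGRESS_TIERS = [
    (10_000, 0.09),        # first 10 TB
    (40_000, 0.085),       # next 40 TB
    (float("inf"), 0.07),  # everything beyond 50 TB
]

def estimate_egress_cost(total_gb, tiers=EGRESS_TIERS):
    """Bill each band of volume at its own rate and return the total in USD."""
    cost = 0.0
    remaining = total_gb
    for band_size, price_per_gb in tiers:
        billed = min(remaining, band_size)
        cost += billed * price_per_gb
        remaining -= billed
        if remaining <= 0:
            break
    return round(cost, 2)

print(estimate_egress_cost(60_000))  # 5000.0
```

Under these placeholder rates, moving 60,000 GB costs 10,000 × 0.09 + 40,000 × 0.085 + 10,000 × 0.07 = 5,000 USD. Re-running the estimate whenever volumes change is one way to keep the plan's assumptions up to date.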

The standards should also encourage the use of technology capabilities that enable data portability. It is important to identify any use of proprietary databases or data processing tools that would require reimplementation, rather than re-hosting or porting, in the event of a supplier exit. Consider provider-neutral technologies and services for data transfer instead of depending on provider-specific tools.

In addition, the standards should state cases where data should be stored in a common open format to improve portability and address how consistent snapshots of required data can be exported in bulk for transportation to the new provider.
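A consistent bulk export in a common open format can be paired with a manifest that the receiving side uses to check what it received. This Python sketch writes rows as JSON Lines and records a row count and SHA-256 checksum; the function name, file naming scheme and manifest fields are hypothetical, and it assumes `rows` was read from a single consistent snapshot.

```python
import hashlib
import json
from pathlib import Path

def export_snapshot(rows, out_dir, dataset_name, snapshot_id):
    """Write rows as JSON Lines plus a manifest for the receiving provider.

    `rows` is assumed to be a list read from one consistent snapshot, so the
    export reflects a single point in time.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    data_path = out / f"{dataset_name}.jsonl"
    with data_path.open("w", encoding="utf-8") as f:
        for row in rows:
            # sort_keys gives a stable, provider-neutral representation
            f.write(json.dumps(row, sort_keys=True) + "\n")
    # Checksum the bytes actually written, so the receiver can verify them.
    digest = hashlib.sha256(data_path.read_bytes()).hexdigest()
    manifest = {
        "dataset": dataset_name,
        "snapshot_id": snapshot_id,
        "format": "jsonl",
        "row_count": len(rows),
        "sha256": digest,
    }
    manifest_path = out / f"{dataset_name}.manifest.json"
    manifest_path.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return manifest
```

The receiving side can recompute the checksum and row count after transfer and compare them with the manifest before loading the data.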

Data practitioners should enforce complete and consistent data catalog use. Refer to CDMC 2.1 Data Catalogs are Implemented, Used, and Interoperable. An accurate inventory of all data and data flows across the data ecosystem simplifies exit planning and execution and reduces their risk. Data migration plans must also cover the migration of all relevant metadata so that accurate, descriptive information is preserved.

Data portability plan considerations

Effective data portability plans that ensure data can be relocated should include provisions for data privacy and security to be maintained throughout the transfer process. Establish an assessment process to identify critical business functions, reducing risk and minimizing the business impact of data portability.

Portability plans should also include considerations for data usage and consent requirements in all affected jurisdictions to ensure legal and regulatory compliance (such as FCA FG 16/5 - Guidance for firms outsourcing to cloud¹).

In addition, plans should specify an approach for transfer in and out of various cloud environments. Specify which toolsets will be available to enable a more efficient data migration. List all the extracted data formats, and indicate if data transformation will be necessary before importing data into the target environment.

When practicable, the plans should outline any automations that would increase operational performance and remove the potential for human error.

Exit plan considerations

Data practitioners should establish plans to transfer data to another cloud service provider or back to an on-premises environment. Effective exit plans should include risk assessments to identify relevant risks and prioritize their mitigation. Risks include legal and regulatory risk, concentration risk, and the availability of skills and resources.
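Risk prioritization in an exit plan is often done with a likelihood-times-impact score. The sketch below assumes hypothetical 1 to 5 scales and example ratings; it is one common scoring convention, not a method prescribed by this framework.

```python
# Hypothetical 1-5 scales; the risks and ratings below are examples only.
def prioritize_risks(risks):
    """Return (name, score) pairs sorted by likelihood x impact, highest first."""
    scored = [(r["name"], r["likelihood"] * r["impact"]) for r in risks]
    return sorted(scored, key=lambda item: item[1], reverse=True)

risks = [
    {"name": "legal and regulatory", "likelihood": 2, "impact": 5},
    {"name": "concentration", "likelihood": 4, "impact": 4},
    {"name": "skills availability", "likelihood": 3, "impact": 2},
]
print(prioritize_risks(risks))
# [('concentration', 16), ('legal and regulatory', 10), ('skills availability', 6)]
```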

An exit plan should also include an up-to-date inventory of services and functionality in use across cloud service and technology providers, and should identify the tools and capabilities, such as cloud discovery technologies, needed to execute it. It should also document reconciliation processes that verify the accuracy and completeness of data moved to the new provider.
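A reconciliation process can compare source and target data by key and by a fingerprint of each record. In the sketch below, the `id` key column, the canonical-JSON fingerprint and the function names are assumptions for illustration; at scale, the same comparison could be run on partition-level counts and checksums rather than on every row in memory.

```python
import hashlib
import json

def row_fingerprint(row):
    """SHA-256 of a canonical JSON form, so key order does not matter."""
    canonical = json.dumps(row, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()

def reconcile(source_rows, target_rows, key="id"):
    """Report completeness and accuracy of a migrated dataset."""
    src = {r[key]: row_fingerprint(r) for r in source_rows}
    tgt = {r[key]: row_fingerprint(r) for r in target_rows}
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "unexpected_in_target": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
        "complete": src == tgt,
    }
```

Missing and unexpected keys test completeness; mismatched fingerprints test accuracy.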

Additional considerations include how well the exit plan aligns with business continuity and disaster recovery plans. The plan should also document how the capabilities of the alternative cloud service and technology solutions will support existing business requirements. Finally, it is important to ensure that both the portability and exit plans have been fully tested and validated. Validation involves following formal procedures for testing, approval, release and periodic review; documenting and persisting all test results; and including key stakeholders in test result approvals.

Advice for Cloud Service and Technology Providers

Cloud service and technology providers involved in data transfers should offer capabilities that support these processes. Most importantly, the provider should offer transparent bulk data transfer that meets the organization's security and data protection requirements. The provider must also allow data identities and entitlements to be exported and imported in bulk, so that access controls can be migrated between providers. The service contract should guarantee visibility into the processes and methods used for data destruction, including confirmation that data requested for removal has been destroyed.

In addition, the organization should have access to user interfaces, APIs, protocols, and data formats for cloud services, to reduce the complexity of data portability. There should also be the capability to export derived data, such as log records or configuration information.

The provider must also support open technologies (open standards or open-source) for administrative and business interfaces. Common, open interfaces make it easier to support multiple providers simultaneously. One example is the Cloud Data Management Interface (CDMI) standard.

Lastly, providers should use open standard APIs to ensure broadly interoperable data discovery and consumption across multiple environments. Refer to CDMC 2.1 Data Catalogs are Implemented, Used, and Interoperable. Such interoperability is required for structured data, but the need is even greater for unstructured data, where transparency of metadata elements within the data catalog is what enables planning for data transfer. This transparency will minimize or eliminate the need to rebuild metadata when moving between cloud service providers.

Questions

- Have requirements for data portability and exit planning been documented and approved?

- Has a risk assessment been performed to highlight degrees of criticality for data and associated processes as input to scoping data portability and exit planning?

- Are there architectural standards on how solutions should provide data transfer and processing from a cloud provider to an alternative provider or on-premises environment?

- Have data portability plans been created, tested and approved?

- Has an exit plan for each provider been created, tested and approved?

Artifacts

- Data Portability and Exit Planning Requirements Document – documented requirements, including business impact analysis and business stakeholder approval

- Architectural Standards – documented and approved architectural standards to support data portability

- Data Portability Plan – documented data portability plan, including associated testing results and appropriate approval(s)

- Exit Plan – documented exit plan, including associated testing results and appropriate approval(s)

Scoring

Not Initiated

No formal data portability and exit plans exist.

Conceptual

No formal data portability and exit plans exist, but the need is recognized, and the development is being discussed.

Developmental

Formal data portability and exit plans are being developed.

Defined

Formal data portability and exit plans are defined and validated by stakeholders.

Achieved

Formal data portability and exit plans are established and adopted by the organization.

Enhanced

Formal data portability and exit plans are established as part of business-as-usual practice with continuous improvement.

6.2 Data Provenance and Lineage are Understood

The data lineage in cloud environments must be captured automatically, and changes to lineage must be tracked and managed. Visualization and reporting of lineage must be implemented to meet the needs of both business and technical users.

6.2.1 Multi-environment lineage discovery is automated

Description

As cloud data storage becomes more important for more organizations, there is increasing demand for automatic, continuous discovery and detection of data lineage. Many cloud environments host very large data volumes, and such environments will benefit from efforts to automate data lineage discovery. Automatic data lineage discovery employs APIs, specialized software and artificial intelligence to locate data assets, identify interdependencies and record data lineage automatically. When practicable, automatic data lineage discovery should be implemented to operate seamlessly across hybrid and multiple cloud environments.

Objectives

- Implement automated functionality that identifies processes that move data.

- Record data lineage metadata for data movement processes that are discovered automatically.

- Ensure lineage auto-discovery identifies processes that move data across jurisdictions, availability zones and physical boundaries.

- Ensure lineage auto-discovery is enabled in hybrid and multiple cloud environments and identifies data movement between those environments.

- Define and implement processes for the review of auto-discovered lineage information.

Advice for Data Practitioners

To achieve automated lineage discovery, data practitioners should exploit cloud services and third-party tool automation capabilities, wherever possible, to identify data process execution within a cloud environment. Data movement processes include ETL, ELT, intentional duplication, data delivery and streaming. The identification should be performed periodically.
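Automated discovery often derives lineage edges from execution logs. The sketch below assumes a hypothetical log format in which each event names a job and lists its input and output datasets with their regions; it records one edge per input/output pair, deduplicates repeated runs and flags edges whose source and target regions differ, which is one way to surface movement across jurisdictions or availability zones.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LineageEdge:
    source: str
    target: str
    process: str
    source_region: str
    target_region: str

    @property
    def crosses_boundary(self):
        # Region stands in for any boundary of interest (jurisdiction, zone).
        return self.source_region != self.target_region

def edges_from_log(events):
    """Derive lineage edges from job-execution log events.

    Each event is a dict with hypothetical fields "job", "inputs" and
    "outputs", where inputs/outputs are lists of (dataset, region) pairs.
    """
    edges = set()  # a set, so repeated runs of a job add no duplicates
    for event in events:
        for src, src_region in event["inputs"]:
            for dst, dst_region in event["outputs"]:
                edges.add(LineageEdge(src, dst, event["job"], src_region, dst_region))
    return edges
```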

Data practitioners should also employ Artificial Intelligence (AI) and Machine Learning (ML) to perform automatic discovery and recording. AI/ML should be used to identify anomalous results in which auto-discovered information may conflict with previously documented lineage—and flag them for review. Existing documentation, previously cataloged metadata, cloud environment logs and application logs can be used as sources for automation efforts and detection of anomalous results.
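Before any AI/ML technique is applied, a simple rule-based baseline can flag conflicts between discovered and documented lineage. The sketch below is that baseline, not an ML model: it treats lineage as sets of `(source, target)` edges, and the function name is hypothetical.

```python
def lineage_anomalies(discovered, documented):
    """Compare auto-discovered edges with documented lineage.

    Edges are (source, target) pairs. Both kinds of difference are returned
    for human review rather than being applied automatically.
    """
    discovered, documented = set(discovered), set(documented)
    return {
        # observed in logs but never recorded in the catalog
        "undocumented": sorted(discovered - documented),
        # recorded in the catalog but not observed in this discovery run
        "not_observed": sorted(documented - discovered),
    }
```

An ML-based approach could then rank or filter these candidates, but the review step stays the same.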

A key step for data practitioners is to establish an automated quality assessment process that reconciles automatically discovered data lineage with current metadata. Another important step is to provide a written and graphic representation of the results of automated data lineage discovery. When implementing automatic lineage discovery, practitioners should ensure that recorded lineage metadata includes all the facets and dimensions necessary to support reporting and visualization. Refer to CDMC 6.2.3 Data lineage reporting and visualization are implemented.

Advice for Cloud Service and Technology Providers

Cloud service and technology providers should provide organizations with tools and capabilities that enable automated multi-environment lineage discovery. One important capability is the creation of processes that automatically discover data lineage within the cloud environment. In addition, the provider should offer access for auto-discovery processes through APIs to infrastructure log information on data placement and application logs on data movement. Logs should not be hidden or abstracted away from auto-discovery processes.

APIs should be available to obtain metadata regarding data movements through the cloud environment. Such metadata covers movement through data tiers, between availability zones and between geographies. Also, organizations should have an end-to-end view of data lineage, typically made possible by stitching or aggregating lineage information from multiple cloud services.
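Stitching lineage from several cloud services into an end-to-end view amounts to merging their edge lists into one graph and traversing it. The sketch assumes that every service exports `(source, target)` edges using a shared, fully qualified dataset identifier; the identifiers and function names are hypothetical.

```python
from collections import defaultdict

def stitch(*service_edges):
    """Merge edge lists exported by different services into one graph.

    Without a shared identifier scheme, nodes from different services
    cannot be joined, so that is assumed here.
    """
    upstream = defaultdict(set)
    for edges in service_edges:
        for src, dst in edges:
            upstream[dst].add(src)
    return upstream

def origins(upstream, dataset):
    """Return the root sources that ultimately feed `dataset`."""
    roots, stack, seen = set(), [dataset], set()
    while stack:
        node = stack.pop()
        if node in seen:  # guards against cycles
            continue
        seen.add(node)
        parents = upstream.get(node, set())
        if not parents and node != dataset:
            roots.add(node)
        stack.extend(parents)
    return roots

graph = stitch(
    [("crm/contacts", "lake/raw_contacts")],                                # ingestion
    [("lake/raw_contacts", "dw/customers"), ("erp/accounts", "dw/customers")],  # warehouse
    [("dw/customers", "bi/churn_report")],                                  # BI
)
print(sorted(origins(graph, "bi/churn_report")))  # ['crm/contacts', 'erp/accounts']
```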

Questions

- Does automatic lineage discovery identify processes that move data across jurisdictions, availability zones and physical boundaries?

- Does automatic lineage discovery identify data movement between hybrid and multiple cloud environments?

- Have processes for reviewing the auto-discovered data lineage information been defined and implemented?

Artifacts

- Lineage Discovery Log – demonstrating automated lineage discovery events, lineage review process and review outcome

- Data Catalog Report – demonstrating the recording of lineage information as metadata

- Lineage Reports – including data movement across jurisdictions, availability zones and physical boundaries, and movements between hybrid and multi-cloud environments

Scoring

Not Initiated

No formal automated multi-environment discovery of data lineage exists.

Conceptual

No formal automated multi-environment discovery of data lineage exists, but the need is recognized, and the development is being discussed.

Developmental

Formal automated multi-environment discovery of data lineage is being developed.

Defined

Formal automated multi-environment discovery of data lineage is defined and validated by stakeholders.

Achieved

Formal automated multi-environment discovery of data lineage is established and adopted by the organization.

Enhanced

Formal automated multi-environment discovery of data lineage is established as part of business-as-usual practice with continuous improvement.

6.2.2 Data lineage changes are tracked and managed

Description

The movement of data along a supply chain from source to consumption will change as changes to applications, data assets and environments are implemented. Changes to the lineage of in-scope data must be tracked and managed to support issue investigation, regulatory compliance and auditing.

Objectives

- Gain approval and adopt data lineage change management policy, standards and procedures that apply consistently across on-premises and cloud environments.

- Ensure that data lineage changes are identified and recorded.

- Record metadata that enables historic data lineage to be accurately reported.

- Enable changes in data lineage to be associated with the underlying business and technology change events.

Advice for Data Practitioners

Data practitioners should begin by identifying roles and responsibilities for data lineage tracking. The next major step is to document standardized approaches to data lineage tracking, version tracking and change management for the organization. It is also important that the tracking and change management policy, standard and procedure document how responsibilities are divided between the organization and its cloud service and technology providers.

Validate data elements and ensure data lineage accountability

Practitioners should define the scope of data lineage tracking and accountability within the organization. It is also necessary to define a data lineage change management policy that establishes data lineage accountability on all platforms at appropriate levels of granularity—including on-premises and cloud environments.

Next, practitioners should establish processes to monitor, track and alert on data lineage changes according to organization policy and data sharing agreements.

Automation

Wherever practicable, employ automation both to record and to access data lineage changes and versions. Automation supports broader accessibility, for example through a secure URL that can easily be distributed to various users, and makes it easier to scale by capturing many relationships and serving many users who need concurrent access to lineage information. Automation also enhances the ability to track data lineage versions, manage workflows, keep an audit trail, permit concurrent edits from multiple users and prevent the distribution of multiple uncontrolled versions. Ensure that data lineage change tracking procedures cover events in which manually recorded changes override automatically recorded lineage.
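Version tracking for lineage can be modelled by closing an edge's validity period rather than deleting it, and by recording the change that added or retired it. The sketch below is a minimal in-memory illustration with hypothetical class, field and change-record names; it supports the as-of queries needed for historical reporting and links each edge version to a business or technology change identifier.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

@dataclass
class EdgeVersion:
    source: str
    target: str
    valid_from: datetime
    added_by: str                        # change record that introduced the edge
    valid_to: Optional[datetime] = None  # None means the edge is still current
    retired_by: Optional[str] = None     # change record that removed the edge

class LineageHistory:
    """Lineage edges whose versions are closed, never deleted."""

    def __init__(self):
        self.versions = []

    def add_edge(self, source, target, at, change_id):
        self.versions.append(EdgeVersion(source, target, at, change_id))

    def retire_edge(self, source, target, at, change_id):
        # Close the current version so the history is preserved.
        for v in self.versions:
            if v.source == source and v.target == target and v.valid_to is None:
                v.valid_to = at
                v.retired_by = change_id

    def as_of(self, when):
        """Return the (source, target) edges in effect at `when`."""
        return {
            (v.source, v.target)
            for v in self.versions
            if v.valid_from <= when and (v.valid_to is None or when < v.valid_to)
        }
```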

Advice for Cloud Service and Technology Providers

Cloud service and technology providers should provide the capability to record data lineage changes and data processing pipeline changes (such as tagging changes for versioning for data processing pipeline releases). It is also important to offer the ability to trigger workflows supporting the organization’s data lineage change processes. Where applicable, providers should offer a change management repository for recording data lineage changes. In addition, providers should offer the ability to access data lineage metadata history to support reporting and visualization of historical lineage for audit purposes.

Data lineage and lineage change history should be readily available in common standard formats. Refer to CDMC Information Model. Providers should present data lineage change management features, interfaces and functionality in clear, accessible documentation.

Questions

- Have policy and procedures for data lineage change management been defined?

- Has accountability for data lineage change management been established across both cloud and on-premises environments?

- Is data lineage change metadata identified and recorded?

- Is data lineage history accurately reportable from recorded metadata?

- Are data lineage changes linked to underlying business and technology change events?

Artifacts

- Data Management Policy, Standard and Procedures – defining and operationalizing data lineage change management

- Data Lineage Change Log – recording accountability for data lineage changes, recording lineage change metadata and linking to business and technology changelogs

- Data Lineage History Reports – generated from recorded metadata

Scoring

Not Initiated

No formal data lineage tracking and data processing pipeline change management exist.

Conceptual

No formal data lineage tracking and data processing pipeline change management exist, but the need is recognized, and the development is being discussed.

Developmental

Formal data lineage tracking and data processing pipeline change management are being developed.

Defined

Formal data lineage tracking and data processing pipeline change management are defined and validated by stakeholders.

Achieved

Formal data lineage tracking and data processing pipeline change management are established and adopted by the organization.

Enhanced

Formal data lineage tracking and data processing pipeline change management are established as part of business-as-usual practice with continuous improvement.

6.2.3 Data lineage reporting and visualization are implemented

Description

In data lineage reporting and visualization, data lineage metadata is presented in forms that can be analyzed and explored to understand data movement from data producer to data consumer. Understanding the data flow is essential for an organization to assess data provenance, perform root cause analysis and impact assessments, validate data integrity and verify data quality. In cloud, hybrid-cloud and multi-cloud environments, it is important that users know the origin, movement and use of the data that resides there. Visualization of captured lineage metadata is a critical capability for comprehensive data management in a cloud environment.

Objectives

- Document and gain approval on data lineage reporting and visualization requirements, including requirements for granularity and metadata augmentation and labeling.

- Implement functionality to generate lineage visualizations automatically from authoritative sources of lineage metadata.

- Provide the ability to augment lineage visualizations with additional metadata, such as data quality metrics and data ownership.

- Ensure that lineage reports and visualizations provide complete point-in-time histories of key activities.

- Ensure that lineage is represented consistently across different reporting and visualization tools and different lineage discovery methods.

- Gain approval for and adopt a policy, standards and procedures for access to data lineage reporting and visualizations that apply consistently across on-premises and cloud environments.

Advice for Data Practitioners

Data lineage metadata becomes actionable through data reporting and visualization. Consumers of data lineage visualizations include data owners and data stewards, who use these reports and visualizations to examine and understand lineage flowing across the boundaries of multiple business units and functions. Consequently, they must be trained and educated to read and interpret data lineage reports and apply them to various business use cases. Data practitioners must ensure that the data lineage reports and visualizations are clear, accurate, timely and readily understood.

Data lineage reporting and visualization must always present the appropriate level of lineage granularity. At the summary level, reports and visualizations need to show the business systems through which data passes, and the data that interacts with those systems, before it reaches its destination. At the detailed level, they should show the fields, transformations, historical behavior and attribute properties of the data on its journey through the enterprise data ecosystem. The visualization and reporting capabilities must rely on a comprehensive data set, which makes collaboration among systems administrators, business groups and departmental silos crucial.
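Summary views can be derived from detailed lineage by rolling field-level edges up to the systems that hold the fields. The sketch below assumes a hypothetical mapping from each field to its system and drops edges that stay within one system; the field and system names are illustrative.

```python
def summarize(field_edges, system_of):
    """Roll field-level lineage edges up to system-level edges.

    field_edges: iterable of (source_field, target_field)
    system_of:   dict mapping each field to the system that holds it
    Edges within a single system are dropped from the summary view.
    """
    summary = set()
    for src, dst in field_edges:
        s, t = system_of[src], system_of[dst]
        if s != t:
            summary.add((s, t))
    return summary

fields = [
    ("crm.contact.email", "dw.customer.email"),
    ("crm.contact.name", "dw.customer.name"),
    ("dw.customer.email", "dw.customer_clean.email"),  # internal to DW
    ("dw.customer.name", "bi.report.name"),
]
system_of = {
    "crm.contact.email": "CRM", "crm.contact.name": "CRM",
    "dw.customer.email": "DW", "dw.customer.name": "DW",
    "dw.customer_clean.email": "DW", "bi.report.name": "BI",
}
print(sorted(summarize(fields, system_of)))  # [('CRM', 'DW'), ('DW', 'BI')]
```

Because the summary is derived from the detailed edges, both views stay consistent with the same underlying lineage metadata.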

Data lineage reporting and visualization should be always-on, employing services and functionality that ensure the availability and recoverability of the reporting systems in case of outages and system faults. Visualization of data lineage can help business users spot the connections in data flows and thereby provide greater transparency and auditability of the data within the ecosystem.

Advice for Cloud Service and Technology Providers

Cloud service and technology providers must provide the features and tools necessary to support data lineage reporting and visualizations—across cloud, hybrid-cloud, and multiple cloud environments. To achieve this, the provider must support open lineage data models and API interfaces to enable an organization to connect and automate lineage data coming from standardized or bespoke sources.

Cloud environment tools and capabilities must enable business users to explore data lineage metadata directly. It is important to realize that data lineage reports and visualizations are necessary to explore technical and business lineage data. For example, technical lineage shows data movement from a file in a cloud environment to a table in the analytical platform. Data stewards maintaining transformation rules of this data movement also need to know what business domains are affected by any upstream changes. The ability to toggle between the technical data flow lineage and business impact lineage will enable data stewards to change data transformation rules confidently and communicate with affected parties.
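Toggling from technical to business lineage can be approximated by walking technical edges downstream from a changed asset and mapping each reached asset to its business domain. The asset names and the `domain_of` mapping in this sketch are hypothetical.

```python
from collections import defaultdict, deque

def affected_domains(edges, domain_of, changed):
    """Business domains downstream of a changed technical asset.

    edges:     iterable of (source, target) technical lineage edges
    domain_of: hypothetical mapping from asset to owning business domain
    """
    downstream = defaultdict(set)
    for src, dst in edges:
        downstream[src].add(dst)
    # Breadth-first walk over technical lineage from the changed asset.
    seen, queue = {changed}, deque([changed])
    while queue:
        node = queue.popleft()
        for nxt in downstream[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(changed)
    return {domain_of[a] for a in seen if a in domain_of}

edges = [
    ("files/landing.csv", "analytics.trades"),
    ("analytics.trades", "reports.risk"),
    ("analytics.trades", "reports.finance"),
]
domain_of = {"analytics.trades": "Markets", "reports.risk": "Risk",
             "reports.finance": "Finance"}
print(sorted(affected_domains(edges, domain_of, "files/landing.csv")))
# ['Finance', 'Markets', 'Risk']
```

A data steward changing the transformation rules for the landing file could use such a result to decide which domain owners to notify.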

Finally, data lineage reporting and visualization tools should present historical changes to the data movement processes. These tools should offer the ability for the user to recreate the lineage flow back to a specific point in time for audit and evidence collection. In addition, the tools should provide the ability to compare versions and highlight changes among versions—which helps evaluate impact to the systems through which the data flows.

Questions

- Have lineage reporting and visualization requirements been documented and approved?

- Do lineage reporting and visualization requirements include requirements for granularity and metadata augmentation and labeling?

- Can lineage visualizations be generated automatically from authoritative sources of lineage metadata?

- Can lineage visualizations be augmented with additional metadata such as data quality metrics and data ownership?

- Can lineage reports and visualizations provide complete point-in-time histories of key activities?

- Is lineage represented consistently across different reporting and visualization tools?

- Is lineage represented consistently regardless of the discovery method used to collect the lineage metadata?

- Is access to data lineage reporting and visualizations granted according to defined policy and procedures?

Artifacts

- Lineage Reporting and Visualization Requirements – including requirements for granularity and metadata enrichment

- Lineage Reporting and Visualization Catalog – detailing the granularity and metadata enrichment supported by each solution

- Lineage Reports – including copies of visualizations

- Data Management Policy, Standard and Procedure – defining and operationalizing the granting of access to data lineage reporting and visualizations

Scoring

Not Initiated

No formal lineage reporting and visualization exists.

Conceptual

No formal lineage reporting and visualization standard exists, but the need is recognized and its development is being discussed.

Developmental

Formal lineage reporting and visualization are being developed.

Defined

Formal lineage reporting and visualization are defined and validated by stakeholders.

Achieved

Formal lineage reporting and visualization are established and adopted by the organization.

Enhanced

Formal lineage reporting and visualization are established as part of business-as-usual practice with continuous improvement.

6.3 Data & Technical Architecture – Key Controls

The following Key Controls align with the capabilities in the Data & Technical Architecture component:

- Control 13 – Cost Metrics

- Control 14 – Data Lineage

Each control with associated opportunities for automation is described in CDMC 7.0 – Key Controls & Automations.

Key Control 13

| Control 13: Cost Metrics | |
|---|---|
| Component | 6.0 Data & Technical Architecture |
| Capability | 6.1 Technical Design Principles are Established and Applied |
| Control Description | Cost metrics directly associated with data use, storage, and movement must be available in the catalog. |
| Risks Addressed | Costs are not managed, detrimentally impacting the commercial viability of the organization. |
| Drivers / Requirements | As the cloud changes the cost paradigm from CapEx to OpEx, organizations require additional visibility into where data movement, storage and usage costs are incurred. Poor architectural choices can incur additional costs: data placement decisions can generate ingress or egress charges, and running data warehouse workloads on OLTP infrastructure incurs extra compute costs. |
| Legacy / On-Premises Challenges | There is limited need to manage data processing or storage costs at the data asset level. Because assets in a data catalog carry no line-item costing, organizations cannot run a cost analysis to understand where their data management costs are being incurred. |
| Automation Opportunities | |
| Benefits | Data owners would be able to understand who is using what data, how frequently that data is accessed and the cost incurred to provide it. |
| Summary | The financial operations infrastructure of cloud service providers is robust enough to identify the accounts and operations that incur costs and to associate those costs with specific data assets as line items in the data catalog. |
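
Control 13 relies on attributing billed costs to individual data assets. The minimal sketch below makes two assumptions. First, the provider's billing export has already been loaded as rows. Second, cloud resources carry a hypothetical `data_asset` tag. Under those assumptions, the sketch totals cost per asset and per cost category and reports any spend that cannot be attributed. Real billing exports differ by provider in schema and field names, so the row layout here is illustrative only.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical billing rows; real provider export schemas differ.
BILLING_ROWS = [
    {"category": "storage", "cost": "120.50", "tags": {"data_asset": "trades_raw"}},
    {"category": "compute", "cost": "310.00", "tags": {"data_asset": "trade_positions"}},
    {"category": "egress", "cost": "45.25", "tags": {"data_asset": "trades_raw"}},
    {"category": "compute", "cost": "80.00", "tags": {}},  # untagged resource
]


def cost_by_asset(rows, tag_key="data_asset"):
    """Sum billed cost per data asset and category; return (per_asset, unattributed)."""
    totals = defaultdict(lambda: defaultdict(Decimal))  # Decimal() starts at 0
    unattributed = Decimal("0")
    for row in rows:
        amount = Decimal(row["cost"])  # Decimal avoids float rounding on currency
        asset = row["tags"].get(tag_key)
        if asset is None:
            unattributed += amount
        else:
            totals[asset][row["category"]] += amount
    per_asset = {asset: dict(by_category) for asset, by_category in totals.items()}
    return per_asset, unattributed
```

For the sample rows, `trades_raw` accumulates 120.50 of storage and 45.25 of egress, and 80.00 of compute stays unattributed. Each per-asset total could then be written to the catalog as a cost line item. A non-zero unattributed amount signals gaps in resource tagging.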

Key Control 14

| Control 14: Data Lineage | |
|---|---|
| Component | 6.0 Data & Technical Architecture |
| Capability | 6.2 Data Provenance and Lineage are Understood |
| Control Description | Data lineage information must be available for all sensitive data. At a minimum, this must include the source from which the data was ingested or in which it was created in a cloud environment. |
| Risks Addressed | Data cannot be shown to have originated from an authoritative source, resulting in a lack of trust in the data, an inability to meet regulatory requirements and inefficiencies in the organization's system architecture. |
| Drivers / Requirements | Organizations need to trust the data being used and confirm that it is sourced in a controlled manner. Regulated organizations produce lineage information as evidence that the information in regulatory reports has been taken from an authoritative source for that type of data. Consumers of sensitive data must be able to evidence that it was sourced from an authoritative source, for example by showing lineage from the authoritative source or by providing the provenance of the data from a supplier. |
| Legacy / On-Premises Challenges | Lineage information is produced manually by tracing the flow of data through systems from source to consumption. The cost of this approach and the consequences of producing incorrect data can be significant. |
| Automation Opportunities | |
| Benefits | Evidence of data lineage for regulatory reports becomes easy to produce. Major financial organizations incur significant costs producing this information manually and retrospectively. |
| Summary | Automatically tracking lineage information for data that feeds regulatory reports would streamline production of the reports' data and eliminate the cost of the manual labor currently required to produce that information. |
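
The minimum requirement in Control 14 can be checked automatically against the catalog. The sketch below assumes a hypothetical `CatalogEntry` record with a sensitivity flag and an `origin` field. That field holds the ingestion source or the system in which the data was created. The sketch also assumes a hypothetical registry of approved sources, meaning authoritative sources plus approved suppliers. It is illustrative only and not a prescribed implementation of the control.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CatalogEntry:
    name: str
    sensitive: bool
    # Hypothetical field: where the data was ingested from or created in the cloud.
    origin: Optional[str] = None


def lineage_gaps(catalog: list[CatalogEntry], approved_sources: set[str]) -> dict[str, list[str]]:
    """Flag sensitive assets lacking a recorded origin or sourced from an unapproved origin."""
    missing = [e.name for e in catalog if e.sensitive and not e.origin]
    unapproved = [
        e.name for e in catalog
        if e.sensitive and e.origin and e.origin not in approved_sources
    ]
    return {"missing_origin": missing, "unapproved_origin": unapproved}


# Hypothetical catalog content and source registry.
CATALOG = [
    CatalogEntry("client_accounts", True, "crm.prod.accounts"),
    CatalogEntry("trade_positions", True, None),
    CatalogEntry("client_extract", True, "sftp://unknown-vendor/drop"),
    CatalogEntry("ref_calendars", False, None),  # not sensitive, so not checked
]
APPROVED_SOURCES = {"crm.prod.accounts"}
```

Running `lineage_gaps(CATALOG, APPROVED_SOURCES)` flags `trade_positions` as missing an origin and `client_extract` as coming from an unapproved origin. Scheduling such a check would turn the manual, retrospective evidence gathering described above into a routine report.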